How to Get a Job in the Age of AI?

December 23, 2025

Another dinobaby post. No AI unless it is an image. This dinobaby is not Grandma Moses, just Grandpa Arnold.

Two interesting employment related articles appeared in my newsfeeds this morning. Let’s take a quick look at each. I will try to add some humor to these write ups. Some may find them downright gloomy.

The first is “An OpenAI Exec Identifies 3 Jobs on the Cusp of Being Automated.” I want to point out that the OpenAI wizard’s own job seems to be secure from his point of view. The write up points out:

Olivier Godement, the head of product for business products at the ChatGPT maker, shared why he thinks a trio of jobs — in life sciences, customer service, and computer engineering — is on the cusp of automation.

Let’s think about each of these broad categories. I am not sure what life sciences means in OpenAI world. The term is like a giant umbrella. Customer service makes some sense. Companies have been trying for years to ignore, terminate, and prevent any money sucking operation related to answering customers’ questions and complaints. No matter how lousy an AI model is, my hunch is that it will be slapped into a customer service role even if it is arguably worse than trying to understand the accent of a person who speaks English as a second or third language.

Young members of “leadership” realize that the AI system used to replace lower-level workers has taken their jobs. Selling crafts on Etsy.com is a career option. Plus, there is politics and maybe Epstein, Epstein, Epstein related careers for some. Thanks, Qwen, you just output a good enough image but you are free at this time (December 13, 2025).

Now we come to computer engineering. I assume the OpenAI person will position himself as an AI adept, which fits under the umbrella of computer engineering. My hunch is that the reference is to coders who do grunt work. The only problem is that the large language model approach to pumping out software can be problematic in some situations. That’s why the OpenAI person is probably not worrying about his job. An informed human has to be in the process of machine-generated code. LLMs do make errors. If the software is autogenerated for one of those newfangled portable nuclear reactors designed to power football field sized data centers, someone will want to have a human check that software. Traditional or next generation nuclear reactors can create some excitement if the software makes errors. Do you want a thorium reactor next to your domicile? What about one run entirely by smart software?

What’s amusing about this write up is that the OpenAI person seems blissfully unaware of the precarious financial situation that Sam AI-Man has created. When and if OpenAI experiences a financial hiccup, will those involved in business products keep their jobs? Olivier might want to consider that eventuality. Some investors are thinking about their options for Sam AI-Man related activities.

The second write up is the type I absolutely get a visceral thrill writing about. A person with a connection (probably accidental or tenuous) lets me trot out my favorite trope (Epstein, Epstein, Epstein) as a way to capture the peculiarity of modern America. This article is “Bill Gates Predicts That Only Three Jobs Will Be Safe from Being Replaced by AI.” My immediate assumption upon spotting the article was that the type of work Epstein, Epstein, Epstein did would not be replaced by smart software. I think that impression is accurate, but, alas, the write up did not include Epstein, Epstein, Epstein work in its story.

What are the safe jobs? The write up identifies three:

- Biology. Remember OpenAI thinks life sciences are toast. Okay, which is correct?

- Energy expertise

- Work that requires creative and intuitive thinking. (Do you think that this category embraces Epstein, Epstein, Epstein work? I am not sure.)

The write up includes a statement from Bill Gates:

“You know, like baseball. We won’t want to watch computers play baseball,” he said. “So there’ll be some things that we reserve for ourselves, but in terms of making things and moving things, and growing food, over time, those will be basically solved problems.”

Several observations:

- AI will cause many people to lose their jobs

- Young people will have to make knick knacks to sell on Etsy or find equally creative ways of supporting themselves

- The assumption that people will have “regular” jobs, buy houses, go on vacations, and do the other stuff organization man type thinking assumed was operative, is a goner.

Where’s the humor in this? Epstein, Epstein, Epstein and OpenAI debt, OpenAI debt, and OpenAI debt. Ho ho ho.

Stephen E Arnold, December x, 2025

Telegram News: AlphaTON, About Face

December 22, 2025

Another dinobaby post. No AI unless it is an image. This dinobaby is not Grandma Moses, just Grandpa Arnold.

Starting in January 2026, my team and I will be writing about Telegram’s Cocoon, the firm’s artificial intelligence push. Unlike the “borrow, buy, hype, and promise” approach of some US firms, Telegram is going a different direction. For Telegram, it is early days for smart software. The impact will be that posts in Beyond Search will decrease beginning Christmas week. The new Telegram News posts will be on a different url or service. Our preliminary tests show that a different approach won’t make much difference to the Arnold IT team. Frankly I am not sure how people will find the new service. I will post the links on Beyond Search, but with the exceptional indexing available from Bing, Google, et al, I have zero clue if these services will find our Telegram Notes.

Why am I making this shift?

Here’s one example. With a bit of fancy footwork, a publicly traded company popped into existence a couple of months ago. Telegram itself does not appear to have any connection to this outfit. However, the TON Foundation’s former president set up an outfit called the TON Strategy Co., which is listed on the US NASDAQ. Then following a similar playbook, AlphaTON popped up to provide those who believe in TONcoin a way to invest in a financial firm anchored to TONcoin. Yeah, I know that having these two public companies semi-linked to Telegram’s TON Foundation is interesting.

But even more fascinating is the news story about AlphaTON using some financial fancy dancing to link itself to Anduril. This is one of the companies familiar to those who keep track of certain Silicon Valley outfits generating revenue from Department of War contracts.

What’s the news?

The deal is off, according to “AlphaTON Capital Corp Issues Clarification on Anduril Industries Investment Program.” The word clarification is not one I would have chosen. The deal has vaporized. The write up says:

It has now come to the Company’s attention that the Anduril Industries common stock underlying the economic exposure that was contractually offered to our Company is subject to transfer restrictions and that Anduril will not consent to any such transfer. Due to these material limitations and risk on ownership and transferability, AlphaTON has made the decision to cancel the Anduril tokenized investment program and will not be proceeding with the transaction. The Company remains committed to strategic investments and the tokenization of desirable assets that provide clear ownership rights and align with shareholder value creation objectives.

I interpret this passage to mean, “Fire, Aim, Ready Maybe.”

With the stock of AlphaTON Capital at about $0.70 as of 11:30 am US Eastern on December 18, 2025, this fancy dancing may end this set with a snappy rendition of Mozart’s Requiem.

That’s why Telegram Notes will be an interesting organization to follow. We think Pavel Durov’s trial in France, the two or maybe one surviving public company, two “foundations” linked to Telegram, and the new Cocoon AI play are going to be more interesting. If Mr. Durov goes to jail, the public company plays fail, and the Cocoon thing dies before it becomes a digital butterfly, I may flow more stories to Beyond Search.

Stay tuned.

Stephen E Arnold, December 22, 2025

Modern Management Method with and without Smart Software

December 22, 2025

Another dinobaby post. No AI unless it is an image. This dinobaby is not Grandma Moses, just Grandpa Arnold.

I enjoy reading and thinking about business case studies. The good ones are few and far between. Most are predictable, almost as if the author was relying on a large language model for help.

“I’m a Tech Lead, and Nobody Listens to Me. What Should I Do?” is an example of a bright human hitting on tactics to become more effective in his job. You can work through the full text of the article and dig out the gems that may apply to you. I want to focus on two points in the write up. The first is the matrix management diagram based on or attributed to Spotify, a music outfit. The second is a method for gaining influence in a modern, let’s go fast company.

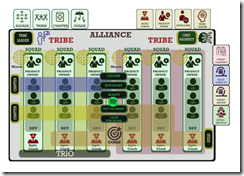

Here’s the diagram that caught my attention:

Instead of the usual business school lingo, you will notice “alliance,” “tribe,” “squad,” and “trio.” I am not sure what these jazzy words mean, but I want to ask you a question, “Looking at this matrix, who is responsible when a problem occurs?” Take your time. I did spend some time looking at this chart, and I formulated several hypotheses:

- The creator wanted to make sure that a member of leadership would have a tough time figuring out who screwed up. If you disagree, that’s okay. I am a dinobaby, and I like those old fashioned flow diagrams with arrows and boxes. In those boxes is the name of the person who has to fix a problem. I don’t know about one’s tribe. I know Problem A is here. Person B is going to fix it. Simple.

- The matrix as displayed allows a lot of people to blame other people. For example, what if the coach is like the leader of the Cleveland Browns, who brilliantly equipped a young quarterback with the incorrect game plan for the first quarter of a football game? Do we blame the coach or do we chase down a product owner? What if the problem is a result of a dependency screw up involving another squad in a different tribe? In practical terms, there is no one with direct responsibility for the problem. Again: Don’t agree? That’s okay.

- The matrix has weird “leadership” or “employment categories” distributed across the X axis at the top of the chart. What’s a chapter? What’s an alliance? What’s self organized and autonomous in a complex technical system? My view is that this is pure baloney designed to make people feel important yet shield any one person from responsibility. I bet some reading this numbered point find my statement out of line. Tough.

The diagram makes clear that the organization is presented as one that will just muddle forward. No one will have responsibility when a problem occurs. No one will know how to fix the problem without dropping other work and reverse engineering what is happening. The chart almost guarantees bafflement when a problem surfaces.

The second item I noticed was this statement or “learning” from the individual who presented the case example. Here’s the passage:

When you solve a real problem and make it visible, people join in. Trust is also built that way, by inviting others to improve what you started and celebrating when they do it better than you.

This passage hooks into the one about solving a problem; to wit:

Helping people debug. I have never considered myself especially smart, but I have always been very systematic when connecting error messages, code, hypotheses, and system behavior. To my surprise, many people saw this as almost magical. It was not magic. It was a mix of experience, fundamentals, intuition, knowing where to look, and not being afraid to dive into third-party library code.

These two passages describe human interactions. Working with others can result in a collective effort greater than the sum of its parts. It is a human manifestation. One fellow described this as interaction efflorescence. Fancy words for what happens when a few people face a deadline and severe consequences for failure.

Why did I spend time pointing out an organizational structure purpose built to prevent assigning responsibility and the very human observations of the case study author?

The answer is, “What will happen when smart software is tossed into this management structure?” First, people will be fired. The matrix will have lots of empty boxes. Second, the human interaction will have to adapt to the smart software. The smart software is not going to adapt to humans. Don’t believe me? One smart software company defended itself by telling a court it is in our terms of service that suicide is not permissible. Therefore, we are not responsible. The dead kid violated the TOS.

How functional will the company be as the very human insight about solving real problems interfaces with software? Man machine interface? Will that be an issue in a go fast outfit? Nope. The human will be excised as a consequence of efficiency.

Stephen E Arnold, December 23, 2025

Windows Strafed by Windows Fanboys: Incredible Flip

December 19, 2025

Another dinobaby post. No AI unless it is an image. This dinobaby is not Grandma Moses, just Grandpa Arnold.

When the Windows folding phone came out, I remember hunting around for blog posts, podcasts, and videos about this interesting device. Following links I bumbled onto the Windows Central Web site. The two fellows who seemed to be front and center had a podcast (a quite irregularly published podcast I might add). I was amazed at the pro-folding gizmo. One of the write ups was panting with excitement. I thought then and think now that figuring out how to fold a screen is a laboratory exercise, not something destined to be part of my mobile phone experience.

I forgot about Windows Central and the unflagging ability to find something wonderfully bigly about the Softies. Then I followed a link to this story: “Microsoft Has a Problem: Nobody Wants to Buy or Use Its Shoddy AI Products — As Google’s AI Growth Begins to Outpace Copilot Products.”

An athlete failed at his Dos Santos II exercise. The coach, a tough love type, offers the injured gymnast a path forward with Mistral AI. Thanks, Qwen, do you phone home?

The cited write up struck me as a technology aficionado pulling off what is called a Dos Santos II. (If you are not into gymnastics, this exercise “trick” involves starting backward with a half twist into a double front in the layout position.) Boom. Perfect 10. From folding phone to “shoddy AI products.”

If I were curious, I would dig into the reasons for this change in tune, instruments, and concert hall. My hunch is that a new manager replaced a person who was talking (informally, of course) to individuals who provided the information without identifying the source. Reuters, the trust outfit, does this on occasion as do other “real” journalists. I prefer to say, here are my observations or my hypotheses about Topic X. Others just do the “anonymous” and move forward in life.

Here are a couple of snips from the write up that I find notable. These are not quite at the “shoddy AI products” level, but I find them interesting.

Snippet 1:

If there’s one thing that typifies Microsoft under CEO Satya Nadella‘s tenure: it’s a general inability to connect with customers. Microsoft shut down its retail arm quietly over the past few years, closed up shop on mountains of consumer products, while drifting haphazardly from tech fad to tech fad.

I like the idea that Microsoft is not sure what it is doing. Furthermore, I don’t think Microsoft ever connected with its customers. Connections come from the Certified Partners, the media lap dogs fawning at Microsoft CEO antics, and brilliant statements about how many Russian programmers it takes to hack into a Windows product. (Hint: The answer is a couple if the Telegram posts I have read are semi accurate.)

Snippet 2:

With OpenAI’s business model under constant scrutiny and racking up genuinely dangerous levels of debt, it’s become a cascading problem for Microsoft to have tied up layer upon layer of its business in what might end up being something of a lame duck.

My interpretation of this comment is that Microsoft hitched its wagon to one of AI’s Cybertrucks, and the buggy isn’t able to pull the Softie’s one-horse shay. The notion of a “lame duck” is that Microsoft cannot easily extricate itself from the money, the effort, the staff, and the weird “swallow your AI medicine, you fool” approach the estimable company has adopted for Copilot.

Snippet 3:

Microsoft’s “ship it now fix it later” attitude risks giving its AI products an Internet Explorer-like reputation for poor quality, sacrificing the future to more patient, thoughtful companies who spend a little more time polishing first. Microsoft’s strategy for AI seems to revolve around offering cheaper, lower quality products at lower costs (Microsoft Teams, hi), over more expensive higher-quality options its competitors are offering. Whether or not that strategy will work for artificial intelligence, which is exorbitantly expensive to run, remains to be seen.

A less civilized editor would have dropped in the industry buzzword “crapware.” But we are stuck with “ship it now fix it later” or maybe just never. So far we have customer issues, the OpenAI technology as a lame duck, and now the lousy software criticism.

Okay, that’s enough.

The question is, “Why the Dos Santos II at this time?” I think citing the third party “Information” is a convenient technique in blog posts. Heck, Beyond Search uses this method almost exclusively except I position what I do as an abstract with critical commentary.

Let me hypothesize (no anonymous “source” is helping me out):

- Whoever at Windows Central was annoyed by a Softie with power is responding to this perceived injustice

- The people at Windows Central woke up one day and heard a little voice say, “Your cheerleading is out of step with how others view Microsoft.” The folks at Windows Central listened and, thus, the Dos Santos II.

- Windows Central did what the author of the article states in the article; that is, using multiple AI services each day. The Windows Central professional realized that Copilot was not as helpful writing “real” news as some of the other services.

Which of these is closer to the pin? I have no idea. Today (December 12, 2025) I used Qwen, Anthropic, ChatGPT, and Gemini. I want to tell you that these four services did not provide accurate output.

Windows Central gets a 9.0 for its flooring Microsoft exercise.

Stephen E Arnold, December 19, 2025

Waymo and a Final Woof

December 19, 2025

We’re dog lovers. Canines are the best thing on this green and blue sphere. We were sickened when we read this article in The Register about the death of a bow-wow: “Waymo Chalks Up Another Four-Legged Casualty On San Francisco Streets.”

Waymo is a self-driving car company based in San Francisco. The company unfortunately confirmed that one of its self-driving cars ran over a small, unleashed dog. The vehicle had adults and children in it. The children were crying after hearing the dog’s suffering. The status of the dog is unknown. Waymo wants to locate the dog’s family to offer assistance and veterinary services.

Waymo cars are popular in San Francisco, but…

“Many locals report feeling uneasy about the fleet of white Jaguar I-Paces roaming the city’s roads, although the offering has proven popular with tourists, women seeking safer rides, and parents in need of a quick, convenient way to ferry their children to school. Waymo currently operates in the SF Bay Area, Los Angeles, and Phoenix, and some self-driving rides are available through Uber in Austin and Atlanta.”

Waymo cars also ran over a famous stray cat named Kit Kat, known as the “Mayor of 16th Street.” May these animals rest in peace. Does the Waymo software experience regret? Yeah.

Whitney Grace, December 19, 2025

Mistakes Are Biological. Do Not Worry. Be Happy

December 18, 2025

Another dinobaby post. No AI unless it is an image. This dinobaby is not Grandma Moses, just Grandpa Arnold.

I read a short summary of a longer paper written by a person named Paul Arnold. I hope this is not misinformation. I am not related to Paul. But this could be a mistake. This dinobaby makes many mistakes.

The article that caught my attention is titled “Misinformation Is an Inevitable Biological Reality Across Nature, Researchers Argue.” The short item was edited by a human named Gaby Clark. The short essay was reviewed by Robert Edan. I think the idea is to make clear that nothing in the article is made up and it is not misinformation.

Okay, but…. Let’s look at a couple of short statements from the write up about misinformation. (I don’t want to go “meta” but the possibility exists that the short item is stuffed full of misinformation. What do you think?)

Here’s an image capturing a youngish teacher outputting misinformation to his students. Okay, Qwen. Good enough.

Here’s snippet one:

… there is nothing new about so-called “fake news…”

Okay, does this mean that software that predicts the next word and gets it wrong is part of this old, long-standing trajectory for biological creatures? For me, the idea that algorithms cobbled together get a pass because “there is nothing new about so-called ‘fake news’” shifts the discussion about smart software. Instead of worrying about a system getting about two thirds of questions right, one accepts that the smart software is good enough.

A second snippet says:

Working with these [the models Paul Arnold and probably others developed] led the team to conclude that misinformation is a fundamental feature of all biological communication, not a bug, failure, or other pathology.

Introducing the notion of “pathology” adds a bit of context to misinformation. Is a human assembled smart software system, trained on content that includes misinformation and processed by algorithms that may be biased in some way, just the way the world works? I am not sure I am ready to flash the green light for some of the AI outfits to output what is demonstrably wrong, distorted, weaponized, or non-verifiable.

What puzzled me is that the article points to itself and to an article by Ling-Wei Kong et al, “A Brief Natural History of Misinformation” in the Journal of the Royal Society Interface.

Here’s the link to the original article. The authors of the publication are, if the information on the Web instance of the article is accurate, Ling-Wei Kong, Lucas Gallart, Abigail G. Grassick, Jay W. Love, Amlan Nayak, and Andrew M. Hein. Six people are listed on the “original” article. The three people identified in the short version worked on that item. This adds up to nine people. Apparently the group believes that misinformation is a part of the biological being. Therefore, there is no cause to worry. In fact, there are mechanisms to deal with misinformation. Obviously a duck quack that sends a couple of hundred mallards aloft can protect the flock. A minimum of one duck needs to check out the threat only to find nothing is visible. That duck heads back to the pond. Maybe others follow? Maybe the duck ends up alone in the pond. The ducks take the viewpoint, “Better safe than sorry.”

But when a system or a mobile device outputs incorrect or weaponized information to a user, there may not be a flock around. If there is a group of people, none of them may be able to identify the incorrect or weaponized information. Thus, the biological propensity to be wrong bumps into an output which may be shaped to cause a particular effect or to alter a human’s way of thinking.

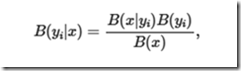

Most people will not sit down and take a close look at this evidence of scientific rigor:

and then follow the logic that leads to:

I am pretty old but it looks as if Mildred Martens, my old math teacher, would suggest the KL divergence wants me to assume some things about q(y). On the right side, I think I see some good old Bayesian stuff but I didn’t see the steps to take me from the KL divergence to the log posterior-to-prior ratio. Would Miss Martens ask a student like me to clarify the transitions, fix up the notation, and eliminate issues between expectation vs. pointwise values? Remember, please, that I am a dinobaby and I could be outputting misinformation about misinformation.
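For what it is worth, here is a sketch of how the textbook version of that step usually goes. The symbols are my placeholders and an assumption on my part: x is the state of the world, y is the signal received, and p stands for the probabilities. This is not the paper’s notation, and I am not claiming it is the authors’ derivation.

```latex
% Pointwise quantity: the log posterior-to-prior ratio for one state x, given signal y
\log \frac{p(x \mid y)}{p(x)}

% Bayes' rule rewrites the same ratio in terms of the likelihood
\frac{p(x \mid y)}{p(x)} = \frac{p(y \mid x)}{p(y)}

% The KL divergence is the expectation of the pointwise log ratio under the posterior
D_{\mathrm{KL}}\bigl(p(x \mid y) \,\|\, p(x)\bigr)
  = \sum_{x} p(x \mid y)\, \log \frac{p(x \mid y)}{p(x)}
  = \mathbb{E}_{x \sim p(\cdot \mid y)}\!\left[ \log \frac{p(x \mid y)}{p(x)} \right]
```

The first line is a value for a single state. The last line is an average over all states. That is the expectation versus pointwise distinction in a nutshell.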

Several observations:

- If one accepts this line of reasoning, misinformation is emergent. It is somehow part of the warp and woof of living and communicating. My take is that one should expect misinformation.

- Anything created by a biological entity will output misinformation. My take on this is that one should expect misinformation everywhere.

- I worry that researchers tackling information, smart software, and related disciplines may work very hard to prove that misinformation is inevitable but the biological organisms can carry on.

I am not sure if I feel comfortable with the normalization of misinformation. As a dinobaby, I think the function of education is to anchor those completing a course of study in a collection of generally agreed upon facts. With misinformation everywhere, why bother?

Net net: One can read this research and the summary article as an explanation why smart software is just fine. Accept the hallucinations and misstatements. Errors are normal. The ducks are fine. The AI users will be fine. The models will get better. Despite this framing that misinformation is everywhere, the results say, “Knock off the criticism of smart software. You will be fine.”

I am not so sure.

Stephen E Arnold, December 18, 2025

The Google Has a New Sheep Herder: An AI Boss to Nip at the Heels of the AI Beasties

December 17, 2025

Another dinobaby post. No AI unless it is an image. This dinobaby is not Grandma Moses, just Grandpa Arnold.

Staffing turmoil appears to be the end-of-year trend in some Silicon Valley outfits. Apple is spitting out executives. Meta is thrashing. OpenAI is doing the Code Red alert thing amidst unsettled wizards. And, today I learned that Google has a chief technologist for AI infrastructure. I think that means data centers, but it could extend some oversight to the new material science lab in the UK that will use AI (of course) to invent new materials. “Exclusive / Google Names New Chief of AI Infrastructure Buildout” reports:

Amin Vahdat, who joined Google from academia roughly 15 years ago, will be named chief technologist for AI infrastructure, according to the memo, and become one of 15 to 20 people reporting directly to CEO Sundar Pichai. Google estimates it will have spent more than $90 billion on capital expenditures by the end of 2025, most of it going into the part of the company Vahdat will now oversee.

The sheep dog attempts to herd the little data center doggies away from environmental issues, infrastructure inconsistencies, and roll-your-own engineering. Woof. Thanks, Venice.ai. Close enough for horseshoes.

I read this as making clear the following:

- Google spent “more than $90 billion” on infrastructure in 2025

- No one was paying attention to this investment

- A former academic steeped in Googliness will herd the sheep in 2026.

I assume that is part of the McKinsey way, Fire, Aim, Ready! Dinobabies like me with some blue chip consulting experience feel slightly more comfortable with the old school Ready, Aim, Fire! But the world today is different from the one I traveled through decades ago. Nostalgia does not cut it in the “we have to win AI” business environment today.

Here’s a quote making clear that planning and organizing were not part of the 2025 check writing. I quote:

“This change establishes AI Infrastructure as a key focus area for the company,” wrote Google Cloud CEO Thomas Kurian in the Wednesday memo congratulating Vahdat.

The cited article puts this sheep herder in context:

In August, Google disclosed in a paper co-authored by Vahdat that the amount of energy used to run the median prompt on its AI models was equivalent to watching less than nine seconds of television and consuming five drops of water. The numbers were far less than what some critics had feared and competitors had likely hoped for. There’s no single answer for how to best run an AI data center. It’s small, coordinated efforts across disparate teams that span the globe. The job of coordinating it all now has an official title.

See and understand. The power consumption for the Google AI data centers is trivial. The Google can plug these puppies into the local power grid, nip at the heels of the people who complain about rising electricity prices and brown outs, and nuzzle the folks who:

- Promise small, local nuclear power generation facilities. No problems with licensing, component engineering, and nuclear waste. Trivialities.

- Repurposed jet engines from a sort of real supersonic jet source. Noise? No problem. Heat? No problem. Emission control? No problem.

- Brand spanking new pressurized water reactors built by the old school nuclear crowd. No problem. Time? No problem. The new folks are accelerationists.

- Recommissioning turned off (deactivated) nuclear power stations. No problem. Costs? No problem. Components? No problem. Environmental concerns? Absolutely no problem.

Google is tops in strategic planning and technology. It should be. It crafted its expertise selling advertising. AI infrastructure is a piece of cake. I think sheep dogs herding AI can do the job which apparently was not done for more than a year. When a problem becomes too big to ignore, restructure. Grrr or Woof, not Yipe, little herder.

Stephen E Arnold, December 17, 2025

The EU – Google Soap Opera Titled “What? Train AI?”

December 16, 2025

Another dinobaby post. No AI unless it is an image. This dinobaby is not Grandma Moses, just Grandpa Arnold.

Ka-ching. That’s the sound of the EU ringing up another fine for one of its favorite US big tech outfits. Once again it is Googzilla in the headlights of a restored 2CV. Here’s the pattern:

1. EU fines

2. Googzilla goes to court

3. EU finds Googzilla guilty

4. Googzilla appeals

5. EU finds Googzilla guilty

6. Googzilla negotiates and says, “We don’t agree but we will pay”

7. Go back to item 1.

This version of the EU soap opera is called training Gemini on whatever content Google has.

The formal announcement of Googzilla’s re-run of a fan favorite is “Commission Opens Investigation into Possible Anticompetitive Conduct by Google in the Use of Online Content for AI Purposes.” I note the hedge word “possible,” but as soap opera fans we know the arc of this story. Can you hear the cackle of the legal eagles anticipating the billings? I can.

The mythical creature Googzilla apologizes to an august body for a mistake. Googzilla is very, very sincere. Thanks, MidJourney. Actually pretty good this morning. Too bad you are not consistent.

The cited show runner document says:

The European Commission has opened a formal antitrust investigation to assess whether Google has breached EU competition rules by using the content of web publishers, as well as content uploaded on the online video-sharing platform YouTube, for artificial intelligence (‘AI’) purposes. The investigation will notably examine whether Google is distorting competition by imposing unfair terms and conditions on publishers and content creators, or by granting itself privileged access to such content, thereby placing developers of rival AI models at a disadvantage.

The EU is trying via legal process to alter the DNA of Googzilla. I am fond of pointing out that beavers do what beavers do. Similarly Googzillas do exactly what the one and unique Googzilla does; that is, anything it wants to do. Why? Googzilla is now entering its prime. It has a small wound on its knee. If examined closely, it is a scar that seems to be the word “monopoly”.

News flash: Filing legal motions against Googzilla will not change its DNA. The outfit is purpose built to keep control of its billions of users and keep the snoops from do gooder and regulatory outfits clueless about what happens to [a] the parsed and tagged data, [b] the metrics thereof, [c] the email, the messages, and the voice data, [d] the YouTube data, and [e] whatever data flows into Googzilla’s maw from advertisers, ad systems, and ad clickers.

The EU does not get the message. I wrote three books about Google, and it was pretty evident in the first one (The Google Legacy) that baby Google was the equivalent of a young Maradona or Messi who was going to wear a jersey with Googzilla 10 emblazoned on its comely yet spikey back.

The write up contains this statement from Teresa Ribera, Executive Vice-President for Clean, Just and Competitive Transition:

A free and democratic society depends on diverse media, open access to information, and a vibrant creative landscape. These values are central to who we are as Europeans. AI is bringing remarkable innovation and many benefits for people and businesses across Europe, but this progress cannot come at the expense of the principles at the heart of our societies. This is why we are investigating whether Google may have imposed unfair terms and conditions on publishers and content creators, while placing rival AI models developers at a disadvantage, in breach of EU competition rules.

Interesting idea as the EU and the US stumble to the side of the street where these ideas are not too popular.

Net net: Googzilla will not change for the foreseeable future. Furthermore, those who don’t understand this are unlikely to get a job at the company.

Stephen E Arnold, December 16, 2025

How Not to Get a Holiday Invite: The Engadget Method

December 15, 2025

Another dinobaby post. No AI unless it is an image. This dinobaby is not Grandma Moses, just Grandpa Arnold.

Sam AI-Man may not invite anyone from Engadget to a holiday party. I read “OpenAI’s House of Cards Seems Primed to Collapse.” The “house of cards” phrase gives away the game. Sam AI-Man built a structure that gravity or Google will pull down. How do I know? Check out this subtitle:

In 2025, it fell behind the one company it couldn’t lose ground to: Google.

The Google. The outfit that shifted into Red Alert or whatever the McKinsey playbook said to call an existential crisis klaxon. The Google. Adjudged a monopoly getting down to work other than running an online advertising system. The Google. An expert in reorganizing a somewhat loosely structured organization. The Google: Everyone except the EU and some allegedly defunded YouTube creators absolutely loves. That Google.

Thanks Venice.ai. I appreciate your telling me I cannot output an image with a “young programmer.” Plugging in “30 year old coder” worked. Very helpful. Intelligent too.

The write up points out:

It’s safe to say GPT-5 hasn’t lived up to anyone’s expectations, including OpenAI’s own. The company touted the system as smarter, faster and better than all of its previous models, but after users got their hands on it, they complained of a chatbot that made surprisingly dumb mistakes and didn’t have much of a personality. For many, GPT-5 felt like a downgrade compared to the older, simpler GPT-4o. That’s a position no AI company wants to be in, let alone one that has taken on as much investment as OpenAI.

Did OpenAI suck it up and crank out a better mouse trap? The write up reports:

With novelty and technical prowess no longer on its side though, it’s now on Altman to prove in short order why his company still deserves such unprecedented levels of investment.

Forget the problems a failed OpenAI poses to investors, employees, and users. Sam AI-Man now has an opportunity to become the highest profile technology professional to cause a national and possibly global recession. Short of war mongering countries, Sam AI-Man will stand alone. He may end up in a museum if any remain open when funding evaporates. School kids could read about him in their history books; that is, if kids actually attend school and read. (Well, there’s always the possibility of a YouTube video if creators don’t evaporate like wet sidewalks when the sun shines.)

Engadget will have to find another festive event to attend.

Stephen E Arnold, December 15, 2025

The Waymo Trip: From Cats and Dogs Waymo to the Parking Lot

December 12, 2025

Another dinobaby post. No AI unless it is an image. This dinobaby is not Grandma Moses, just Grandpa Arnold.

I am reasonably sure that Google Waymo offers “way more” than any other self driving automobile. It has way more cameras. It has way more publicity. Does it have way more safety than, for instance, a Tesla confused on Highway 101? I don’t know.

I read “Waymo Investigation Could Stop Autonomous Driving in Its Tracks.” The title was snappy, but the subtitle was the real hook:

New video shows a dangerous trend for Waymo autonomous vehicles.

What’s the trend?

Weeks ago, the Austin Independent School District noticed a disturbing trend: Waymo vehicles were not stopping for school buses that had their crossing guard and stop sign deployed.

Oh, Google Waymo smart cars don’t stop for school buses. Kids always look before jumping off a school bus and dashing across a street to see their friends or rush home to scroll Instagram. Smart software definitely can predict the trajectories of school kids. Well, probability is involved, so there is a teeny tiny chance that a smart car might do the “kill the Mission District” cat. But the chance is teeny tiny.

Thanks, Venice.ai. Good enough.

The write up asserts:

The Austin ISD has been in communication with Waymo regarding the violations, which it reports have occurred approximately 1.5 times per week during this school year. Waymo has informed them that software updates have been issued to address the issue. However, in a letter dated November 20, 2025, the group states that there have been multiple violations since the supposed fix.

What’s with these people in Austin? Chill. Listen to some country western music. Think about moving back to the Left Coast. Get a life.

Instead of doing the Silicon Valley wizardly thing, Austin showed why Texas is not the center of AI intelligence and admiration. The story says:

On Dec. 1, after Waymo received its 20th citation from Austin ISD for the current school year, Austin ISD decided to release the video of the previous infractions to the public. The video shows all 19 instances of Waymo violating school bus safety rules. Perhaps most alarmingly, the violations appear to worsen over time. On November 12, a Waymo vehicle was recorded violating a law by making a left turn onto a street with a school bus, its stop signs and crossbar already deployed. There are children in the crosswalk when the Waymo makes the turn and cuts in front of them. The car stops for a second then continues without letting the kids pass.

Let’s assume that after 16 years of development and investment, the Waymo self driving software intelligence gets an F in school bus recognition. Conjuring up a vehicle that can dawdle down 101 at rush hour driven by a robot is a Silicon Valley inspiration. Imagine. One can sit in the automobile, talk on the phone, fiddle with a laptop, or just enjoy coffee and a treat from Philz in peace. Just ignore the imbecilic drivers in other automobiles. Yes, let’s just think it and it will become real.

I know the idea sounds great to anyone who has suffered traffic on 101 or the Foothills, but crushing the Mission District stray cat is just a warm up. What type of publicity heat will maiming Billy or Sally generate, particularly if the father is a big time attorney who left Seal Team 6 to enforce and defend city, county, state, and federal law? Cats don’t have lawyers. The parents of harmed children either do or can get one pretty easily.

Getting a lawyer is much easier than delivering on a dream that is a bit of a nightmare after 16 years and an untold amount of money. But the idea is a good one. Sort of.

Stephen E Arnold, December 12, 2025