Why Emulating Oxford University in the US Is an Errand for Fools

June 11, 2025

Just a dinobaby and no AI: How horrible an approach?

I read an essay with the personal touches I admire in writing: A student sleeping on the floor, an earnest young man eating KY fry on a budget airline, and an individual familiar with Laurel and Hardy comedies. This person wrote an essay, probably by hand on a yellow tablet with an ink pen, titled “5 Ways to Stop AI Cheating.”

What are these five ways? The ones I noted are: have rules and punish violators. Humiliation in front of peers is a fave. Presumably these students did not have weapons or belong to a street gang active in the school. The other five ways identified in the essay are:

- Handwrite everything. No typewriters, no laser printers, and no computers. (I worked with a fellow on a project for Persimmon IT which did some work on the DEC Alpha, and he used computers. Handwriting was a no go for interacting with the DECs equipped with the “hot” chip way back when.)

- Professors interact with a student and talk or interrogate the young scholar to be

- Examinations were oral or written. One passed or failed. None of this namby pamby “gentleman’s C” KY fry stuff

- Inflexibility about knowing or not knowing. Know and one passes. Not knowing, one becomes a member of Parliament or a titan of industry

- No technology. (I would not want to suggest that items one and five are redundant; that would be harshly judged by some of my less intellectually gifted teachers at assorted so-so US institutions of inferior learning.)

Now let’s think about the fool’s errand. The US is definitely a stratified society, just like the UK. If one is a “have,” life is going to be much easier than if one is a “have not.” Why? Money, family connections, exposure to learning opportunities, possibly tutors, etc. In the US, technology is ubiquitous. I do not want to repeat myself, so a couple of additional thoughts will appear in item five below.

Next, grilling a student one on one is an invitation to trouble. A student with hurt feelings need only say, “He/she is not treating me fairly.” Bingo. Stuff happens. I am not sure how a one on one in a private space would be perceived by a neutral third party. If one has to meet, meet in a public place.

Third, writing in blue books poses two problems. The first is that the professor has to read what the student has set forth in handwriting. Second, many students can neither write legible cursive nor print letters in an easily recognizable form. These are hurdles in the US. Elsewhere, I am not sure.

Fourth, inflexibility is a characteristic of some factions in the US. However, helicopter parents and assorted types of “outrage” can make inflexibility for a public servant a risky business. If Debbie is a dolt, one must find a way to be flexible if her parents are in the upper tier of American economic strata. Inflexibility means litigation or some negative networking or a TikTok video.

Finally, the problem with the no-tech approach is that it just won’t work. Consider smart software. Teachers use it and have LLMs fix up “original research.” Students use it to avoid reading and writing. Some schools ban mobile devices. Care to try that at an American university when shooters can prowl the campus?

The essay, like the fantasies of people who want to live like those in Florence in the 15th century, is nuts. Pestilence, poverty, filth, violence, and big time corruption: these were everyday companions.

Cheating is here to stay. Politician is a code word for crook. Faculty (at least at Harvard) is the equivalent of bad research. Students are the stuff of YouTube shorts. Writing in blue books? A trend which may not have the staying power of Oxford’s stasis. I do like the bookstore, however.

Stephen E Arnold, June 11, 2025

Education in Angst: AI, AI, AI

June 9, 2025

Just a dinobaby and no AI: How horrible an approach?

Bing Crosby went through a phase in which ai, ai, ai was the groaner’s fingerprint. Now, it is educated adults worrying about smart software. AI, AI, AI. Case in point: “An Existential Crisis: Can Universities Survive ChatGPT?” The sub-title is pure cubic zirconia:

Students are using AI to cheat and professors are struggling to keep up. If an AI can do all the research and writing, what is the point of a degree?

I can answer this question. The purpose of a college degree is, in order of importance, [1] get certified as having been accepted to and participated in a university’s activities, [2] have fun, including but not limited to drinking, sex, and intramural sports, [3] meet friends who are likely to get high paying jobs, start companies, or become powerful political figures. Notice that I did not list reading, writing, and arithmetic. A small percentage of college attendees will be motivated, show up for class, do homework, and possibly discover something of reasonable importance. The others? These will be mobile phone users, adepts with smart software, and equipped with sufficient funds to drink beer and go on spring break trips.

The cited article presents this statement:

Research by the student accommodation company Yugo reveals that 43 per cent of UK [United Kingdom] university students are using AI to proofread academic work, 33 per cent use it to help with essay structure and 31 per cent use it to simplify information. Only 2 per cent of the 2,255 students said they used it to cheat on coursework.

I thought the Yugo was a quite terrible automobile, but by reading this essay, I learned that the name “Yugo” refers to a research company. (When it comes to auto names, I quite like “No Va” or no go in Spanish. No, I did not consult ChatGPT for this translation.)

The write up says:

Universities are somewhat belatedly scrambling to draw up new codes of conduct and clarifying how AI can be used depending on the course, module and assessment.

Since when did academic institutions respond with alacrity to a fresh technical service? I would suggest that the answer to this question is, “Never.”

The “existential crisis” lingo appears to come from the non-AI powered former vice chancellor of the University of Buckingham (Buckinghamshire, England), located near the River Great Ouse. (No, I did not need smart software to know the name of this somewhat modest “river.”)

What is an existential crisis? I have to dredge up a recollection of Dr. Francis Chivers’ lecture on the topic in the 1960s. I think she suggested something along the lines: A person is distressed about something: Life, its purpose, or his/her identity.

A university is not a person and, therefore, to my dinobaby mind, not able to have an existential crisis. More appropriately, those whose livelihood depends on universities for money, employment, a peer group, social standing, or just feeling like scholarship has delivered esteem, are in crisis. The university is a collection of buildings and may have some quantum “feeling” but most structures are fairly reticent to offer opinions about what happens within their walls.

I quibble. The worriers about traditional education should worry. One of those “move fast, break things” moments has arrived to ruin the sleep of those collecting paychecks from a university. Some may worry that their side gig may be put into financial squalor. Okay, worry away.

What’s the fix, according to the cited essay? Ride out the storm, adapt, and go to meetings.

I want to offer a handful of observations:

- Higher education has been taking karate chops since Silicon Valley started hiring high school students and suggesting they don’t need to attend college. Examples of what can happen include Bill Gates and Mark Zuckerberg. “Be like them” is a siren song for some bright sparks.

- University professionals have been making up stuff for their research papers for years. Smart software has made this easier. Peer review by pals became a type of search engine optimization in the 1980s. How do I know this? Gene Garfield told me in 1981 or 1983. (He was the person who pioneered link analysis in sci-tech, peer reviewed papers and is, therefore, one of the individuals who enabled PageRank.)

- Universities in the United States have been in the financial services business for years. Examples range from student loans to accepting funds for “academic research.” Certain schools have substantial income from these activities which do not directly transfer to high quality instruction. I myself was a “research fellow.” I got paid to do “work” for professors who converted my effort into consulting gigs. Did I mind? I had zero clue that I was a serf. I thought I was working on a PhD.* Plus, I taught a couple of classes if you could call what I did “teaching.” Did the students know I was clueless? Nah, they just wanted a passing grade and to get out of my 4 pm Friday class so they could drink beer.

Smart software snaps in quite nicely to the current college and university work flow. A useful instructional program will emerge. However, I think only schools with big reputations and winning sports teams will be the beacons of learning in the future. Smart software has arrived, and it is not going to die quickly even if it hallucinates, costs money, and generates baloney.

Net net: Change is not coming. Change has arrived.

——————–

* Note: I did not finish my PhD. I went to work at Halliburton’s nuclear unit. Why? Answer: Money. Should I have turned in my dissertation? Nah, it was about Chaucer, and I was working on kinetic weapons. Definitely more interesting to a 23 year old.

Stephen E Arnold, June 9, 2025

Information Filtering with Mango Chutney, Please

May 30, 2025

Censorship is having a moment. And not just in the US. For example, India’s The Wire laments, “Academic Censorship Has Become the Norm in Indian Universities.” Writer Apoorvanand, who teaches at Delhi University, describes his experience when a seminar he was to speak at was “postponed.” See the article for the details, like the importance and difficulty of bringing together a diverse panel. Or the college principal who informed speakers the event was off without notifying its organizer, Apoorvanand’s colleague. He writes:

“It was a breach of trust and a personal humiliation, my colleague fumed. Of course the problematic speaker would not know the story but he knew what was the real reason. He said that principals today only want one type of speaker to be invited. The non-problematic ones. Was it only about an individual? No. My friend felt that it went beyond that. There is an attempt to disallow discussion on topics which can make students think. Any seminars which would expose the students to different ways of looking at a problem and making their own decision are not permitted. For the last 10 years we see only one kind of meets being held in the colleges. They cannot be called academic and intellectual fora. They are platforms created for propaganda for the regime and one kind of ‘Indianness’ or ‘nationalism.’ If you do a survey of the topics across colleges, you would find a monotonous similarity. It is a campaign to indoctrinate young people. For it to succeed, the authorities keep other voices and ideas out of the reach of the students.”

Despite the organizer’s intent to not single out the “problematic” participant, the individual knew. Apoorvanand spoke to him and learned cancellations are now a common occurrence for him. And, he added, for a growing list of his colleagues. Neither is this pattern limited to Delhi University. We learn:

“When I told [other teachers] about this, they opened up. Some of them were from ‘elite’ universities like Ashoka or Krea and Azim Premji University. There too the authorities have become very cautious. Names of the speakers have to be cleared by the authorities. There is an order in one university to share the slides the speakers would use three days before the event. The teachers are also cautioned against going to places that could upset the regime or accepting invitations from people who are considered to be its critics.”

At Indian universities both public and private, Apoorvanand writes, censorship is now the norm, a bit like mango chutney.

Cynthia Murrell, May 30, 2025

Now a Magazine Figures Out Why Its Circulation Sucks: Clueless People Do Not Subscribe

April 24, 2025

Sadly I am a dinobaby and too old and stupid to use smart software to create really wonderful short blog posts.

I read an essay in a business magazine much loved by those former colleagues I enjoyed at the blue-chip consulting firm where once I worked as a jejune dinobaby. The publication called itself a “newspaper,” but it looked like a magazine to me. The tone was a bit more breezy than the documents cranked out by the blue-chip consulting firm. But the general approach was the same: We know so much more than you. We can, therefore, explain the basics of India’s poverty, the new jet engine from Rolls Royce, or the mysteries of the US home loan system.

That “newspaper” is the Economist. Don’t get me wrong. As with the British debate teams my colleague and I faced in college, the faces change but the tone and attitude persist. Too bad we won more debate competitions against British teams than we lost, and it sure wasn’t because we were smarmy.

Not surprisingly I read “Too Many Adults Are Absolutely Clueless.” Yep, the same fingerprints appeared on this story. The main idea is that “adults” — you are supposed to insert fat Americans for this token — are stupid. Okay, I am not sure this is news to anyone who has bumped into students in America. I grew up in a bubble. Lucky me. Then for one year I found myself teaching in a US high school in a “poor section” of a Midwestern city.

Guess what?

On day one I figured out that the majority of the students in my charge were not what my life experiences taught me to view as “smart.” When was this? Last month, five years ago? Nope. I did this one year’s work in 1968 when I switched from the PhD program at Duquesne University to the University of Illinois so I could study with one of my mentors. As I recall, the program which I was hired to “manage” and teach was called “The CWS Program” or Cooperative Work Study Program. I won’t go into details, but here is a quick snapshot of what I learned more than a half century ago:

- The students, aged 14 to 17, came from homes in which two parents were exceptions

- The students were unable to read the provided text books and, as my team learned, the sports pages and funny papers were out of reach.

- Many of the students came to school to fool around or to fill time when there weren’t more interesting things to do in the area in which they lived or slept

- The students in the CWS program were there because they had brushes with the law and were released to their parent or guardian from a detention center if they agreed to go to school and participate in the CWS program. (Translation: Most were guilty of minor crimes like shoplifting clothes and food. A few were guilty of assault and gang related activities)

- The academic level of these students (buckle up, buttercup) was not significantly different from the performance of the students in the non-CWS population of this particular high school.

Now in the spring of 2025 I learn that the Economist, a publication which has been fighting to keep up its paid circulation to blue-chip consultant types and their ilk, has discovered that American students and young adults lack basic skills.

What? Where have you newspaper ostriches been for the last 50 years? Perhaps the folks at the down-home Economist have not visited Blackpool, England, lately. That’s an example of what’s shaking in the intellectually thriving atmosphere of the sceptered isle.

The write up asks:

Need to change a tyre or file your taxes? In America, “adulting” courses can help

The courses won’t help. The problems in education and “adulting” have anchored their roots deep in society. A couple of classes will not fill in what is missed when these are absent:

- Intact families

- Enough to eat and a place to sleep for kids and adults

- A supportive, learning-oriented social environment.

Today’s information flows have simply accelerated the erosion of learning “basic things” like threading a needle, putting oil in a vehicle, and understanding that credit card bills have to be paid with actual fiat currency. (Visa UK is keen on hooking a credit card to crypto currency, so good luck with that.)

Now why doesn’t the Economist have a larger and fast growing subscriber base? The number of individuals who can make sense of the articles is a tiny percentage of a large set of potential subscribers. Like learning how to fix a broken dish, semi-esoteric writing is too much work.

If you want to reach people, make a short video and post it on TikTok. If you want to catch my attention, write about something that is not exactly a recycling of the modern equivalent of ancient history.

China has a lot more fun pointing out the problems of the US with its aggressive China Smart, US Dumb content marketing than writing about a class in Austin, Texas.

Net net: Yeah, grow up. Plus I would add, “Write about fixing up Blackpool while considering who is clueless.”

Stephen E Arnold, April 24, 2025

Smart Software Exploits Direct Tuition Payment. Sure, the Fraud Is Automated

April 22, 2025

No AI, just the dinobaby himself.

The Voice of San Diego published “As Bot Students Continue to Flood In, Community Colleges Struggle to Respond.” The write up is one of those recipes that “real” news outfits provide to inform their readers about a crime. When I worked through the article, my reaction was, “The process California follows for community college student assistance is a big juicy sandwich on a picnic table in the park on a warm summer day.”

Will the insects flock to the sandwich?

Absolutely. Plus, telling the insects where the sandwich is and the basics of getting their mandibles on that sandwich does one thing: It provides an easy-to-follow set of instructions for a bad actor.

The write up says:

Kevin Alston, a business professor who has taught at Southwestern for nearly 20 years, has stumbled across even more troubling incidents. During a prior semester, he actually called some of the students who were enrolled in his classes but had not submitted any classwork. “One student said ‘I’m not in your class. I’m not even in the state of California anymore’” Alston recalled. The student told him they had been enrolled in his class two years ago but had since moved on to a four-year university out of state. “I said, ‘Oh, then the robots have grabbed your student ID and your name and re-enrolled you at Southwestern College. Now they’re collecting financial aid under your name,’” Alston said.

The opportunity for fraud is a result of certain rules and regulations that require that financial aid be paid directly to the “student.” Enroll as a fake student and get a chunk of money. The more fake students that apply and receive aid, the more money the fake students receive.

California appears to be taking steps to reduce the fraud.

Several observations:

- A basket of rules and regulations appears to create this fraud opportunity

- Smart software in the hands of clever individuals allows the bad actors to collect money. (I am not sure how one cashes multiple checks made out to a fake person, but obviously there are ways around this problem. Are those nifty automatic teller machine deposits an issue?)

- The problem, according to the write up, has been known and getting larger since 2021.

I must admit that I think about online fraud in the hands of pig butchering outfits in the Golden Triangle. The fake student scam sounds like a smaller scale operation. Making a teacher the one who must identify the fake student does not seem to be working.

Okay, let’s see what the great state of California does to resolve this problem. Perhaps the instructors need to attend online classes in fraud detection, apply for financial aid, and get an extra benefit for this non-teaching work? Will community college teachers make good cyber investigators? Sure, especially those teaching history, social science, and literature classes.

Stephen E Arnold, April 22, 2025

Survey: Kids and AI Tools

March 12, 2025

Our youngest children are growing up alongside AI. Or, perhaps, it would be more accurate to say increasingly intertwined with it. Axios tells us, "Study Zeroes in on AI’s Youngest Users." Writer Megan Morrone cites a recent survey from Common Sense Media that examined AI use by children under 8 years old. The researchers surveyed 1,578 parents last August. We learn:

"Even the youngest of children are experimenting with a rapidly changing technology that could reshape their learning and critical thinking skills in unknown ways. By the numbers: One in four parents of kids ages 0-8 told Common Sense their children are learning critical thinking skills from using AI.

- 39% of parents said their kids use AI to ‘learn about school-related material,’ while only 8% said they use AI to ‘learn about AI.’

- For older children (ages 5-8) nearly 40% of parents said their child has used an app or a device with AI to learn.

- 24% of children use AI for ‘creative content,’ like writing short stories or making art, according to their parents."

It is too soon to know the long-term effects of growing up using AI tools. These kids are effectively subjects in a huge experiment. However, we already see indications that reliance on AI is bad for critical thinking skills. And that research is on adults, never mind kids whose base neural pathways are just forming. Parents, however, seem unconcerned. Morrone reports:

- More than half (61%) of parents of kids ages 0-8 said their kids’ use of AI had no impact on their critical thinking skills.

- 60% said there was no impact on their child’s well-being.

- 20% said the impact on their child’s creativity was ‘mostly positive.’

Are these parents in denial? They cannot just be happy to offload parenting to algorithms. Right? Perhaps they just need more information. Morrone points us to EqualAI’s new AI Literacy Initiative but, again, that resource is focused on adults. The write-up emphasizes the stakes of this great experiment on our children:

‘Our youngest children are on the front lines of an unprecedented digital transformation,’ said James P. Steyer, founder and CEO of Common Sense.

‘Addressing the impact of AI on the next generation is one of the most pressing issues of our time,’ Miriam Vogel, CEO of EqualAI, told Axios in an email. ‘Yet we are insufficiently developing effective approaches to equip young people for a world where they are both using and profoundly affected by AI.’

What does this all mean for society’s future? Stay tuned.

Cynthia Murrell, March 12, 2025

Who Knew? AI Makes Learning Less Fun

February 14, 2025

Bill Gates was recently on the Jimmy Fallon show to promote his biography. In the interview, Gates shared his views on AI, stating that AI will replace a lot of jobs. Fallon hoped that TV show hosts wouldn’t be replaced, and he probably doesn’t have anything to worry about. Why? Because he’s entertaining and interesting.

Humans love to be entertained, but AI just doesn’t have the capability of pulling it off. Media and Learning shared one teacher’s experience with AI-generated learning videos: “When AI Took Over My Teaching Videos, Students Enjoyed Them Less But Learned The Same.” Media and Learning conducted an experiment to see whether students would learn more from teacher-made or AI-generated videos. Here’s how the experiment went:

“We used generative AI tools to generate teaching videos on four different production management concepts and compared their effectiveness versus human-made videos on the same topics. While the human-made videos took several days to make, the analogous AI videos were completed in a few hours. Evidently, generative AI tools can speed up video production by an order of magnitude.”

The AI videos used ChatGPT-written video scripts, Midjourney for illustrations, and HeyGen for teacher avatars. The teacher-made videos were made in the traditional manner of teachers writing scripts, recording themselves, and editing the video in Adobe Premiere.

When it came to students retaining and being tested on the educational content, both types of video yielded the same results. Students, however, enjoyed the teacher-made videos more than the AI ones. Why?

“The reduced enjoyment of AI-generated videos may stem from the absence of a personal connection and the nuanced communication styles that human educators naturally incorporate. Such interpersonal elements may not directly impact test scores but contribute to student engagement and motivation, which are quintessential foundations for continued studying and learning.”

Media and Learning suggests that AI could be used to complement instruction time, freeing teachers up to focus on personalized instruction. We’ll see what happens as AI becomes more competent, but we can rest easy for now that human engagement is more interesting than algorithms. Or at least Jimmy Fallon can.

Whitney Grace, February 14, 2025

A New Year Alert: Americans Cannot Read

January 1, 2025

The United States is a large country with a self-contained nature. Because of its monolith status, the United States is very isolated. The rest of the world views the US as a stupid country, and NBC News shares evidence for that statement: “Survey: Growing Number Of U.S. Adults Lack Literacy Skills.” The National Center for Education Statistics (NCES) reported that the gap between high-skilled and low-skilled readers widened considerably: the share of adults in the lowest-skilled group rose from 19% in 2017 to 28% in 2023.

The substantial difference doesn’t bode well for the US, but when it is compared to other countries, the US fared well. The US’s scores stayed even according to the Survey of Adult Skills. This test surveyed over two dozen countries, and many of them are members of the Organization for Economic Cooperation and Development. The survey measures the working-age population’s literacy, numeracy, and problem-solving skills. Most of the countries, including European and Asian countries, had comparable results to the US.

The greatest surprises were that Japan saw a four-point increase from 5% to 9%, England remained the same at 17%, Singapore jumped from 26% to 30%, and Germany saw a spike from 18% to 20%. The biggest changes were in South Korea and Lithuania. Both countries went from the teens to thirty percent or higher.

This doesn’t mean the US and other nations are idiots (arguably):

“Low scores don’t equal illiteracy, [NCES Commissioner Peggy Carr] said — the closest the survey comes to that is measuring those who could be called functionally illiterate, which is the inability to read or write at a level at which you’re able to handle basic living and workplace tasks.

Asked what could be causing the adult literacy decline in the U.S., Carr said, ’It is difficult to say.’”

The Internet and lack of reading are the cause, dingbat!

Whitney Grace, January 1, 2025

The US and Math: Not So Hot

January 1, 2025

In recent decades, the US educational system has increasingly emphasized teaching to the test over niceties like critical thinking and deep understanding. How is that working out for us? Not well. Education news site Chalkbeat reports, "U.S. Math Scores Drop on Major International Test."

Last year, the Trends in International Mathematics and Science Study assessed over 650,000 fourth and eighth graders in 64 countries. The test is performed every four years, and its emphasis is on foundational skills in those subjects. Crucial knowledge for our young people to have, not just for themselves but for the future of the country. That future is not looking so good. The write-up includes a chart of the rankings, with the U.S. now squarely in the middle. We learn:

"U.S. fourth graders saw their math scores drop steeply between 2019 and 2023 on a key international test even as more than a dozen other countries saw their scores improve. Scores dropped even more steeply for American eighth graders, a grade where only three countries saw increases. The declines in fourth grade mathematics in the U.S. were among the largest in the participating countries, though American students are still in the middle of the pack internationally. The extent of the decline seems to be driven by the lowest performing students losing more ground, a worrying trend that predates the pandemic."

So we can’t just blame this on the pandemic, when schools were shuttered and students "attended" classes remotely. A pity. The results are no surprise to many who have been sounding alarm bells for years. So why not just drop perpetual testing and return to more effective instruction? It couldn’t have anything to do with corporate interests, could it? Naw, even the jaded and powerful must know the education of our youth is too important to put behind profits.

Cynthia Murrell, January 1, 2025

Smart Software and Knowledge Skills: Nothing to Worry About. Nothing.

July 5, 2024

This essay is the work of a dinobaby. Unlike some folks, no smart software improved my native ineptness.

I read an article in Bang Premier (an estimable online publication of which I had no prior knowledge). It is now a “fave of the week.” The story “University Researchers Reveal They Fooled Professors by Submitting AI Exam Answers” was one of those experimental results which caused me to chuckle. I like to keep track of sources of entertaining AI information.

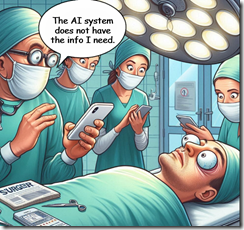

A doctor and his surgical team used smart software to ace their medical training. Now a patient learns that the AI system does not have the information needed to perform life-saving surgery. Thanks, MSFT Copilot. Good enough.

The Bang Premier article reports:

Researchers at the University of Reading have revealed they successfully fooled their professors by submitting AI-generated exam answers. Their responses went totally undetected and outperformed those of real students, a new study has shown.

Is anyone surprised?

The write up noted:

Dr Peter Scarfe, an associate professor at Reading’s school of psychology and clinical language sciences, said about the AI exams study: “Our research shows it is of international importance to understand how AI will affect the integrity of educational assessments. We won’t necessarily go back fully to handwritten exams, but the global education sector will need to evolve in the face of AI.”

But the knee slapper is this statement in the write up:

In the study’s endnotes, the authors suggested they might have used AI to prepare and write the research. They stated: “Would you consider it ‘cheating’? If you did consider it ‘cheating’ but we denied using GPT-4 (or any other AI), how would you attempt to prove we were lying?” A spokesperson for Reading confirmed to The Guardian the study was “definitely done by humans”.

The researchers may not have used AI to create their report, but is it possible that some of the researchers thought about this approach?

Generative AI software seems to have hit a plateau for technology, financial, or training issues. Perhaps those who are trying to design a smart system to identify bogus images, machine-produced text and synthetic data, and nifty videos which often look like “real” TikTok-type creations will catch up? But if the AI innovators continue to refine their systems, the “AI identifier” software is effectively in a game of cat-and-mouse. Reacting to smart software means that existing identifiers will be blind to the new systems’ outputs.

The goal is a noble one, but the advantage goes to the AI companies, particularly those who want to go fast and break things. Academics get some benefit. New studies will be needed to determine how much fakery goes undetected. Will a surgeon who used AI to get his or her degree be able to handle a tricky operation and get the post-op drugs right?

Sure. No worries. Some might not think this is a laughing matter. Hey, it’s AI. It is A-Okay.

Stephen E Arnold, July 5, 2024