Google Fireworks: No Boom, Just Ka-ching from the EU Regulators

July 7, 2025

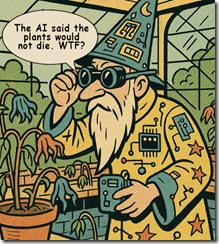

No smart software to write this essay. This dinobaby is somewhat old fashioned.

The EU celebrates the 4th of July with a firecracker for the Google. No bang, just ka-ching, which is the sound of the cash register ringing … again. “Exclusive: Google’s AI Overviews Hit by EU Antitrust Complaint from Independent Publishers.” The trusted news source, which reminds me that it is trustworthy, reports:

Alphabet’s Google has been hit by an EU antitrust complaint over its AI Overviews from a group of independent publishers, which has also asked for an interim measure to prevent allegedly irreparable harm to them, according to a document seen by Reuters. Google’s AI Overviews are AI-generated summaries that appear above traditional hyperlinks to relevant webpages and are shown to users in more than 100 countries. It began adding advertisements to AI Overviews last May.

Will the fine alter the trajectory of the Google? Answer: Does a snowball survive a flyby of the sun?

Several observations:

- Google, like Microsoft, absolutely has to make its smart software investments pay off and pay off in a big way

- The competition for AI talent makes fat, confused ducks candidates for becoming foie gras. Mr. Zuckerberg is going to buy the best ducks he can. Sports and Hollywood star compensation only works if the product pays off at the box office.

- Google’s “leadership” operates as if regulations from mere governments are annoyances, not rules to be obeyed.

- The products and services appear to be multiplying like rabbits. Confusion, not clarity, seems to be the consequence of decisions operating without a vision.

Is there an easy, quick way to make Google great again? My view is that the advertising model anchored to matching messages with queries is the problem. Ad revenue is likely to shift from many advertisers to blockbuster campaigns. Up the quotas of the sales team. However, the sales team may no longer be able to sell at a pace that copes with the cash burn for the alleged next big thing, super intelligence.

Reuters, the trusted outfit, says:

Google said numerous claims about traffic from search are often based on highly incomplete and skewed data.

Yep, highly incomplete and skewed data. The problem for Google is that we have a small tank of nasty cichlids. In case you don’t have ChatGPT at hand, a cichlid is a fish that will kill and eat its children. My cichlids have names: Chatty, Pilot girl, Miss Trall, and Dee Seeka. This means that when stressed or confined our cichlids are going to become killers. What happens then?

Stephen E Arnold, July 7, 2025

Publishers Will Love Off the Wall by Google

June 27, 2025

![Dino 5 18 25](https://www.arnoldit.com/wordpress/wp-content/uploads/2025/06/Dino-5-18-25_thumb3_thumb_thumb.gif)

No smart software involved just an addled dinobaby.

Ooops. Typo. I meant “offerwall.” My bad.

Google has thrown in the towel on the old-school, Backrub, Clever, and PageRank-type of search. A comment made to me by a Xoogler in 2006 was accurate. My recollection is that this wizard said, “We know it will end. We just don’t know when.” I really wish I could reveal this person, but I signed a never-talk document. Because I am a dinobaby, I stick to the rules of the information highway as defined by a high-fee but annoying attorney.

How do I know the end has arrived? Is it the endless parade of litigation? Is it the on-going revolts of the Googlers? Is it the weird disembodied management better suited to general consulting than running a company anchored in zeros and ones?

No.

I read “As AI Kills Search Traffic, Google Launches Offerwall to Boost Publisher Revenue.” My mind interpreted the neologism “offerwall” as “off the wall.” The write up reports as actual factual:

Offerwall lets publishers give their sites’ readers a variety of ways to access their content, including through options like micro payments, taking surveys, watching ads, and more. In addition, Google says that publishers can add their own options to the Offerwall, like signing up for newsletters.

Let’s go with “off the wall.” If search does not work, how will those looking for “special offers” find them? Groupon? Nextdoor? Craigslist? A billboard on Highway 101? A door knob hanger? Bulk direct mail at about $2 a mail shot? Mr. Spock mind melds?

The newspaper and magazine publishing world I knew has been vaporized. If I try, I can locate a newsstand in the local Kroger, but with the rodent problems, I think the magazine display was in a blocked aisle last week. I am not sure about newspapers. Where I live a former chef delivers the New York Times and Wall Street Journal. “Deliver” is generous because an actual newspaper lands in the tube only about 40 percent of the time.

Did Google cause this? No, it was not a lone actor set on eliminating the newspaper and magazine business. Craig Newmark’s Craigslist zapped classified advertising. Other services eliminated the need for weird local newspapers. Once in the small town in Illinois in which I went to high school, a local newscaster created a local newspaper. In Louisville, we have something called Coffeetime or Coffeetalk. It’s a very thin, stunted newspaper printed on brown paper in black ink. Memorable but almost unreadable.

Google did what it wanted for a couple of decades, and now the old-school Web search is a dead duck. Publishers are like a couple of snow leopards trying to remain alive as tourist-filled Land Rovers roar down slushy mountain roads in Nepal.

The write up says:

Google notes that publishers can also configure Offerwall to include their own logo and introductory text, then customize the choices it presents. One option that’s enabled by default has visitors watch a short ad to earn access to the publisher’s content. This is the only option that has a revenue share… However, early reports during the testing period said that publishers saw an average revenue lift of 9% after 1 million messages on AdSense, for viewing rewarded ads. Google Ad Manager customers saw a 5-15% lift when using Offerwall as well. Google also confirmed to TechCrunch via email that publishers with Offerwall saw an average revenue uplift of 9% during its over a year in testing.

Yep, off the wall. Old-school search is dead. Google is into becoming Hollywood and cable TV. Super Bowl advertising: Yes, yes, yes. Search. Eh, not so much. Publishers, hey, we have an off the wall deal for you. Thanks, Google.

Stephen E Arnold, June 27, 2025

Teams Today, Cloud Data Leakage Allegations Tomorrow?

June 27, 2025

An opinion essay written by a dinobaby who did not rely on smart software.

The creep of “efficiency” manifests in numerous ways. A simple application becomes increasingly complex. The result, in many cases, is software that loses the user in chrome trim, mud flaps, and stickers for vacation spots. The original vehicle wears a Halloween costume and can be unrecognizable to someone who does not use the software for six months and returns to find a different creature.

What’s the user reaction to this? For regular users, few care too much. For meta-users — that is, those who look at the software from a different perspective; for example, that of a bean counter — the accumulation of changes produces more training costs, more squawks about finding employees who can do the “work,” and creeping cost escalation. The fix? Cheaper or free software. “German Government Moves Closer to Ditching Microsoft: ‘We’re Done with Teams!’” explains:

The long-running battle of Germany’s northernmost state, Schleswig-Holstein, to make a complete switch from Microsoft software to open-source alternatives looks close to an end. Many government operatives will permanently wave goodbye to the likes of Teams, Word, Excel, and Outlook in the next three months in a move to ensure independence, sustainability, and security.

The write up includes a statement that resonates with me:

Digitalization Minister Dirk Schroedter has announced that “We’re done with Teams!”

My team has experimented with most video conferencing software. I did some minor consulting to an outfit called DataBeam years and years ago. Putting a person in front of a screen for virtual interaction is not something we first tried in the lockdown days. Nope. We fiddled with Sparcs and the assorted accoutrements. We tried whatever became available when one of my clients would foot the bill. I was okay with a telephone, but the future was mind-addling video conferences. Go figure.

Our experience with Teams at Arnold Information Technology is that the system balks when we use it on a Mac Mini as a user who does not pay. On a machine with a paid account, the oddities of the interface were more annoying than Zoom’s bizarre approach. I won’t comment about the other services to which we have access, but these too are not the slickest auto polishes on the Auto Zone’s shelves.

Digitalization Minister Dirk Schroedter (Germany) is quoted as saying:

The geopolitical developments of the past few months have strengthened interest in the path that we’ve taken. The war in Ukraine revealed our energy dependencies, and now we see there are also digital dependencies.

Observations are warranted:

- This anti-Microsoft stance is not new, but it has not been linked to thinking in relationship to Russia’s special action.

- Open source software may not be perfect, but it does offer an option. Microsoft “owns” software in the US government, but other countries may be unwilling to allow Microsoft to snap on the shackles of proprietary software.

- Cloud-based information is likely to become an issue with some thistles going forward.

The migration of certain data to data brokers might be waiting in the wings in a restaurant in Brussels. Someone in Germany may want to serve up that idea to other EU member nations.

Stephen E Arnold, June 27, 2025

A Business Opportunity for Some Failed VCs?

June 26, 2025

An opinion essay written by a dinobaby who did not rely on smart software.

Do you want to open a T shirt and baseball cap shop with snappy quotes? If the answer is, “Yes,” I have a suggestion for you. Tucked into “Artificial Intelligence Is Not a Miracle Cure: Nobel Laureate Raises Questions about AI-Generated Image of Black Hole Spinning at the Heart of Our Galaxy” is this gem of a quotation:

“But artificial intelligence is not a miracle cure.”

The context: Reinhard Genzel, “an astrophysicist at the Max Planck Institute for Extraterrestrial Physics,” offered the observation when smart software happily generated images of a black hole. These are mysterious “things” which industrious wizards find amidst the numbers spewed by “telescopes.” Astrophysicists are discussing in an academic way exactly what the properties of a black hole are. One wing of the community has suggested that our universe exists within a black hole. Other wings offer equally interesting observations about these phenomena.

The write up explains:

an international team of scientists has attempted to harness the power of AI to glean more information about Sagittarius A* from data collected by the Event Horizon Telescope (EHT). Unlike some telescopes, the EHT doesn’t reside in a single location. Rather, it is composed of several linked instruments scattered across the globe that work in tandem. The EHT uses long electromagnetic waves — up to a millimeter in length — to measure the radius of the photons surrounding a black hole. However, this technique, known as very long baseline interferometry, is very susceptible to interference from water vapor in Earth’s atmosphere. This means it can be tough for researchers to make sense of the information the instruments collect.

The fix is to feed the data into a neural network and let the smart software solve the problem. It did, and generated the somewhat tough-to-parse images in the write up. To a dinobaby, one black hole image looks like another.

But the quote states what strikes me as a truism for 2025:

“But artificial intelligence is not a miracle cure.”

Those who have funded smart software are unlikely to buy a hat or T shirt with this statement printed in bold letters.

Stephen E Arnold, June 26, 2025

Meeker Reveals the Hurdle the Google Must Get Over: Can Google Be Agile Again?

June 20, 2025

Just a dinobaby and no AI: How horrible an approach?

The hefty Meeker Report explains Google’s PR push, flood of AI announcements, and statements about advertising revenue. Fear may be driving the Googlers to be the Silicon Valley equivalent of Dan Aykroyd and Steve Martin’s “wild and crazy guys.” Google offers up the Sundar & Prabhakar Comedy Show. Similar? I think so.

I want to highlight two items from the 300 page plus PowerPoint deck. The document makes clear that one can create a lot of slides (foils) in six years.

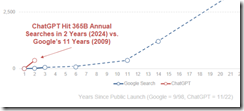

The first item is a chart on page 21. Here it is:

Note the tiny little line near the junction of the x and y axis. Now look at the red lettering:

ChatGPT hit 365 billion annual searches by Year since public launches of Google and Chat GPT — 1998 – 2025.

Let’s assume Ms. Meeker’s numbers are close enough for horse shoes. The slope of the ChatGPT search growth suggests that the Google is losing click traffic to Sam AI-Man’s ChatGPT. I wonder if Sundar & Prabhakar eat, sleep, worry, and think as the Code Red light flashes quietly in the Google lair? The light flashes: Sundar says, “Fast growth is not ours, brother.” Prabhakar responds, “The chart’s slope makes me uncomfortable.” Sundar says, “Prabhakar, please, don’t think of me as your boss. Think of me as a friend who can fire you.”

Now this quote from the top Googler on page 65 of the Meeker 2025 AI encomium:

The chance to improve lives and reimagine things is why Google has been investing in AI for more than a decade…

So why did Microsoft ace out Google with its OpenAI, ChatGPT deal in January 2023?

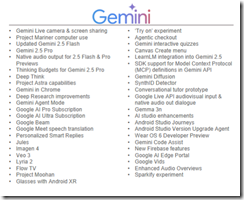

Ms. Meeker’s data suggests that Google is doing many AI projects because it named them for the period 5/19/25-5/23/25. Here’s a run down from page 260 in her report:

And what did Microsoft, Anthropic, and OpenAI talk about in the same time period?

Google is an outputter of stuff.

Let’s assume Ms. Meeker is wildly wrong in her presentation of Google-related data. What’s going to happen if the legal proceedings against Google force divestment of Chrome or there are remediating actions required related to the Google index? The Google may be in trouble.

Let’s assume Ms. Meeker is wildly correct in her presentation of Google-related data. What’s going to happen if OpenAI, the open source AI push, and the clicks migrate from the Google to another firm? The Google may be in trouble.

Net net: Google, assuming the data in Ms. Meeker’s report are good enough, may be confronting a challenge it cannot easily resolve. The good news is that the Sundar & Prabhakar Comedy Show can be monetized on other platforms.

Is there some hard evidence? One can read about it in Business Insider? Well, ooops. Staff have been allegedly terminated due to a decline in Google traffic.

Stephen E Arnold, June 20, 2025

Move Fast, Break Your Expensive Toy

June 19, 2025

An opinion essay written by a dinobaby who did not rely on smart software.

The weird orange newspaper online service published “Microsoft Prepared to Walk Away from High-Stakes OpenAI Talks.” (I quite like the Financial Times, but orange?) The big news is that a copilot may be creating tension in the cabin of the high-flying software company. The squabble has to do with? Give up? Money and power. Shocked? It is Sillycon Valley type stuff, and I think the squabble is becoming more visible. What’s next? Live streaming the face-to-face meetings?

A pilot and copilot engage in a friendly discussion about paying for lunch. The art was created by that outstanding organization OpenAI. Yes, good enough.

The orange service reports:

Microsoft is prepared to walk away from high-stakes negotiations with OpenAI over the future of its multibillion-dollar alliance, as the ChatGPT maker seeks to convert into a for-profit company.

Does this sound like a threat?

The squabbling pilot and copilot radioed into the control tower this burst of static filled information:

“We have a long-term, productive partnership that has delivered amazing AI tools for everyone,” Microsoft and OpenAI said in a joint statement. “Talks are ongoing and we are optimistic we will continue to build together for years to come.”

The newspaper online service added:

In discussions over the past year, the two sides have battled over how much equity in the restructured group Microsoft should receive in exchange for the more than $13bn it has invested in OpenAI to date. Discussions over the stake have ranged from 20 per cent to 49 per cent.

As a dinobaby observing the pilot and copilot navigate through the cloudy skies of smart software, it certainly looks as if the duo are arguing about who pays what for lunch when the big AI tie up glides to a safe landing. However, the introduction of a “nuclear option” seems dramatic. Will this option be a modest low yield neutron gizmo or a variant of the 1961 Tsar Bomba, which fried animals and lichen within a 35 kilometer radius and converted an island in the Arctic to a parking lot?

How important is Sam AI-Man’s OpenAI? The cited article reports this from an anonymous source (the best kind in my opinion):

“OpenAI is not necessarily the frontrunner anymore,” said one person close to Microsoft, remarking on the competition between rival AI model makers.

Which company kicked off what seems to be a rather snappy set of negotiations between the pilot and the copilot? The cited orange newspaper adds:

A Silicon Valley veteran close to Microsoft said the software giant “knows that this is not their problem to figure this out, technically, it’s OpenAI’s problem to have the negotiation at all”.

What could the squabbling duo do do do (a reference to Bing Crosby’s version of “I Love You” for those too young to remember the song’s hook or the Bingster for that matter):

- Microsoft could reach a deal, make some money, and grab the controls of the AI powered P-39 Airacobra training aircraft, and land without crashing at the Renton Municipal Airport

- Microsoft and OpenAI could fumble the landing and end up in Lake Washington

- OpenAI could bail out and hitchhike to the nearest venture capital firm for some assistance

- The pilot and copilot could just agree to disagree and sit at separate tables at the IHOP in Renton, Washington

One can imagine other scenarios, but the FT’s news story makes it clear that anonymous sources, threats, and a bit of desperation are now part of the Microsoft and OpenAI relationship.

Yep, money and control — business essentials in the world of smart software which seems to be losing its claim as the “next big thing.” Are those stupid red and yellow lights flashing at Microsoft and OpenAI as they are at Google?

Stephen E Arnold, June 19, 2025

Up for a Downer: The Limits of Growth… Baaaackkkk with a Vengeance

June 13, 2025

Just a dinobaby and no AI: How horrible an approach?

Where were you in 1972? Oh, not born yet. Oh, hanging out in the frat house or shopping with sorority pals? Maybe you were working at a big time consulting firm?

An outfit known as Potomac Associates slapped its name on a thought piece with some repetitive charts. The original work evolved from an outfit contributing big ideas. The Club of Rome lassoed William W. Behrens, Dennis and Donella Meadows, and Jørgen Randers to pound data into the then-state-of-the-art World3 model allegedly developed by Jay Forrester at MIT. (Were there graduate students involved? Of course not.)

The result of the effort was evidence that growth becomes unsustainable and everything falls down. Business, government systems, universities, etc. etc. Personally I am not sure why the idea that infinite growth with finite resources will last forever was a big deal. The idea seems obvious to me. I was able to get my little hands on a copy of the document courtesy of Dominique Doré, the super great documentalist at the company which employed my jejune and naive self. Who was I to think, “This book’s conclusion is obvious, right?” Was I wrong. The concept of hockey sticks that had handles to the ends of the universe was a shocker to some.

The book’s big conclusion is the focus of “Limits to Growth Was Right about Collapse.” Why? I think the realization is a novel one to those who watched their shares in Amazon, Google, and Meta zoom to the sky. Growth is unlimited, some believed. The write up in “The Next Wave,” an online newsletter or information service, happily quotes an update to the original Club of Rome document:

This improved parameter set results in a World3 simulation that shows the same overshoot and collapse mode in the coming decade as the original business as usual scenario of the LtG standard run.

Bummer. In the kiddie story, Chicken Little had an acorn plop on her head. Chicken Little promptly proclaimed in a peer reviewed academic paper with non reproducible research and a YouTube video:

The sky is falling.

But keep in mind that the kiddie story is fiction. Humans are adept at survival. Maslow’s hierarchy of needs captures the spirit of the species. Will life as modern CLs perceive it end?

I don’t think so. Without getting too philosophical, I would point to Gottlieb Fichte’s thesis, antithesis, synthesis as a reasonably good way to think about change (gradual and catastrophic). I am not into philosophy so when life gives you lemons, one can make lemonade. Then sell the business to a local food service company.

Collapse and its pal chaos create opportunities. The sky remains.

The cited write up says:

Economists get over-excited when anyone mentions ‘degrowth’, and fellow-travelers such as the Tony Blair Institute treat climate policy as if it is some kind of typical 1990s political discussion. The point is that we’re going to get degrowth whether we think it’s a good idea or not. The data here is, in effect, about the tipping point at the end of a 200-to-250-year exponential curve, at least in the richer parts of the world. The only question is whether we manage degrowth or just let it happen to us. This isn’t a neutral question. I know which one of these is worse.

See, degrowth creates opportunities. Chicken Little was wrong when the acorn beaned her. The collapse will be just another chance to monetize. Today is Friday the 13th. Watch out for acorns and recycled “insights.”

Stephen E Arnold, June 13, 2025

Developers: Try to Kill ‘Em Off and They Come Back Like Giant Hogweeds

June 12, 2025

Just a dinobaby and no AI: How horrible an approach?

Developers, which probably extends to “coders” and “programmers”, have been an employee category of note for more than a half century. Even the esteemed Institute for Advanced Study enforced some boundaries between the “real” thinking mathematicians and the engineers who fooled around in the basement with a Stone Age computer.

Giant hogweeds can have negative impacts on humanoids who interact with them. Some say the same consequences ensue when accountants, lawyers, and MBAs engage in contact with programmers: Skin irritation and possibly blindness.

“The Recurring Cycle of ‘Developer Replacement’ Hype” addresses this boundary. The focus is on smart software which allegedly can do heavy-lifting programming. One of my team (Howard, the recipient of the old and forgotten Information Industry Association award for outstanding programming) is skeptical that AI can do what he does. I think that our work on the original MARS system which chugged along on the AT&T IBM MVS installation in Piscataway in the 1980s may have been a stretch for today’s coding wonders like Claude and ChatGPT. But who knows? Maybe these smart systems would have happily integrated the Information Dimensions database with MVS and allowed the newly formed Baby Bells to share certain data and “charge” one another for those bits? Trivial work now I suppose in the wonderful world of PL/1, Assembler, and the Basis “GO” instruction in one of today’s LLMs tuned to “do” code.

The write up points out that the tension between bean counters, MBAs and developers follows a cycle. Over time, different memes have surfaced suggesting that there was a better, faster, and cheaper way to “do” code than with programmers. Here are the “movements” or “memes” the author of the cited essay presents:

- No code or low code. The idea is that working in PL/1 or any other “language” can be streamlined with middleware between the human and the executables, the libraries, and the control instructions.

- The cloud revolution. The idea is that one just taps into really reliable and super secure services or micro services. One needs to hook these together and a robust application emerges.

- Offshore coding. The concept is simple: Code where it is cheap. The code just has to be good enough. The operative word is cheap. Note that I did not highlight secure, stable, extensible, and similar semi desirable attributes.

- AI coding assistants. Let smart software do the work. Microsoft allegedly produces oodles of code with its smart software. Google is similarly thrilled with the idea that quirky wizards can be allowed to find their future elsewhere.

The essay’s main point is that despite the memes, developers keep cropping up like those pesky giant hogweeds.

The essay states:

Here’s what the "AI will replace developers" crowd fundamentally misunderstands: code is not an asset—it’s a liability. Every line must be maintained, debugged, secured, and eventually replaced. The real asset is the business capability that code enables. If AI makes writing code faster and cheaper, it’s really making it easier to create liability. When you can generate liability at unprecedented speed, the ability to manage and minimize that liability strategically becomes exponentially more valuable. This is particularly true because AI excels at local optimization but fails at global design. It can optimize individual functions but can’t determine whether a service should exist in the first place, or how it should interact with the broader system. When implementation speed increases dramatically, architectural mistakes get baked in before you realize they’re mistakes. For agency work building disposable marketing sites, this doesn’t matter. For systems that need to evolve over years, it’s catastrophic. The pattern of technological transformation remains consistent—sysadmins became DevOps engineers, backend developers became cloud architects—but AI accelerates everything. The skill that survives and thrives isn’t writing code. It’s architecting systems. And that’s the one thing AI can’t do.

I agree, but there are some things programmers can do that smart software cannot. Get medical insurance.

Stephen E Arnold, June 12, 2025

A SundAI Special: Who Will Get RIFed? Answer: News Presenters for Sure

June 1, 2025

Just a dinobaby and some AI: How horrible an approach?

Why would “real” news outfits dump humanoids for AI-generated personalities? For my money, there are three good reasons:

- Cost reduction

- Cost reduction

- Cost reduction.

The bean counter has donned his Ivy League super smart financial accoutrements: Meta smart glasses, an Open AI smart device, and an Apple iPhone with the vaunted AI inside (sorry, Intel, you missed this trend). Unfortunately the “good enough” approach, like gradient descent, does not deal in reality. Sum those near misses and what do you get: Dead organic things. The method applies to flora and fauna, including humanoids with automatable jobs. Thanks, You.com, you beat the pants off Venice.ai which simply does not follow prompts. A perfect solution for some applications, right?

My hunch is that many people (humanoids) will disagree. The counter arguments are:

- Human quantum behavior; that is, flubbing lines, getting into on air spats, displaying annoyance standing in a rain storm saying, “The wind velocity is picking up.”

- The cost of recruitment, training, health care, vacations, and pension plans (ho ho ho)

- The management hassle of having to attend meetings to talk about, become deciders, and — oh, no — accept responsibility for those decisions.

I read “‘The White-Collar Bloodbath’ Is All Part of the AI Hype Machine.” I am not sure how fear creates an appetite for smart software. The push for smart software boils down to generating revenues. To achieve revenues one can create a new product or service like the iPhone or the original Google search advertising machine. But how often do those inventions doddle down the Information Highway? Not too often because most of the innovative new new next big things are smashed by a Meta-type tractor trailer.

The write up explains that layoff fears are not operable in the CNN dataspace:

If the CEO of a soda company declared that soda-making technology is getting so good it’s going to ruin the global economy, you’d be forgiven for thinking that person is either lying or fully detached from reality. Yet when tech CEOs do the same thing, people tend to perk up. ICYMI: The 42-year-old billionaire Dario Amodei, who runs the AI firm Anthropic, told Axios this week that the technology he and other companies are building could wipe out half of all entry-level office jobs … sometime soon. Maybe in the next couple of years, he said.

First, the killing jobs angle is probably easily understood and accepted by individuals responsible for “cost reduction.” Second, the ICYMI reference means “in case you missed it,” a bit of short hand popular with those who are not yet 80 year old dinobabies like me. Third, the source is a member of the AI leadership class. Listen up!

Several observations:

- AI hype is marketing. Money is at stake. Do stakeholders want their investments to sit mute and wait for the old “build it and they will come” pipedream to manifest?

- Smart software does not have to be perfect; it needs to be good enough. Once it is good enough cost reductionists take the stage and employees are ushered out of specific functions. One does not implement cost reductions at random. Consultants set priorities, develop scorecards, and make some charts with red numbers and arrows pointing up. Employees are expensive in general, so some work is needed to determine which can be replaced with good enough AI.

- News, journalism, and certain types of writing along with customer “support”, and some jobs suitable for automation like reviewing financial data for anomalies are likely to be among the first to be subject to a reduction in force or RIF.

So where does that leave the neutral observer? On one hand, the owners of the money dumpster fires are promoting like crazy. These wizards have to pull rabbit after rabbit out of a hat. How does that get handled? Think P.T. Barnum.

Some AI bean counters, CFOs, and financial advisors dream about dumpsters filled with money burning. This was supposed to be an icon, but Venice.ai happily ignores prompt instructions and includes fruit next to a burning something against a wooden wall. Perfect for the good enough approach to news, customer service, and MBA analyses.

On the other hand, you have the endangered species, the “real” news people and others in the “knowledge business but automatable knowledge business.” These folks are doing what they can to impede the hyperbole machine of smart software people.

Who or what will win? Keep in mind that I am a dinobaby. I am going extinct, so smart software has zero impact on me other than making devices less predictable and resistant to my approach to “work.” Here’s what I see happening:

- Increasing unemployment for those lower on the “knowledge work” food chain. Sorry, junior MBAs at blue chip consulting firms. Make sure you have lots of money, influential parents, or a former partner at a prestigious firm as a mom or dad. Too bad for those studying to purvey “real” news. Junior college graduates working in customer support. Yikes.

- “Good enough” will replace excellence in work. This means that the air traffic controller situation is a glimpse of what deteriorating systems will deliver. Smart software will probably come to the rescue, but those antacid gobblers will be history.

- Increasing social discontent will manifest itself. To get a glimpse of the future, take an Uber from Cape Town to the airport. Check out the low income housing.

Net net: The cited write up is essentially anti-AI marketing. Good luck with that until people realize the current path is unlikely to deliver the pot of gold for most AI implementations. But cost reduction only has to show payoffs. Balance sheets do not reflect a healthy, functioning datasphere.

Stephen E Arnold, June 1, 2025

AI Can Do Your Knowledge Work But You Will Not Lose Your Job. Never!

May 30, 2025

The dinobaby wrote this without smart software. How stupid is that?

Ravical is going to preserve jobs for knowledge workers. Nevertheless, the company’s AI may complete 80% of the work in these types of organizations. No bean counter on earth would figure out that reducing humanoid workers would cut costs, eliminate the useless vacation scam, and chop the totally unnecessary health care plan. None.

The write up “Belgian AI Startup Says It Can Automate 80% of Work at Expert Firms” reports:

Joris Van Der Gucht, Ravical’s CEO and co-founder, said the “virtual employees” could do 80% of the work in these firms. “Ravical’s agents take on the repetitive, time-consuming tasks that slow experts down,” he told TNW, citing examples such as retrieving data from internal systems, checking the latest regulations, or reading long policies. Despite doing up to 80% of the work in these firms, Van Der Gucht downplayed concerns about the agents supplanting humans.

I believe this statement is 100 percent accurate. AI firms do not use excessive statements to explain their systems and methods. The article provides more concrete evidence that this replacement of humans is spot on:

Enrico Mellis, partner at Lakestar, the lead investor in the round, said he was excited to support the company in bringing its “proven” experience in automation to the booming agentic AI market. “Agentic AI is moving from buzzword to board-level priority,” Mellis said.

Several observations:

- Humans absolutely will be replaced, particularly those who cannot sell.

- Bean counters will be among the first to point out that software, as long as it is good enough, will reduce costs.

- Executives are judged on financial performance, not on the quality of the work, as long as revenues and profits result.

Will Ravical become the go-to solution for outfits engaged in knowledge work? No, but it will become a company that other agentic AI firms will watch closely. As long as the AI is good enough, humanoids without the ability to close deals will have plenty of time to ponder opportunities in the world of good enough, hallucinating smart software.

Stephen E Arnold, May 30, 2025