Apple and Google Texting Bad. So What Are the Options?

December 17, 2024

This blog post flowed from the sluggish and infertile mind of a real live dinobaby. If there is art, smart software of some type was probably involved.

This headline caught my attention: “FBI Warns iPhone and Android Users to Stop Texting Each Other for a Disturbing Reason.” What is that disturbing reason? According to the online article cited:

Both the FBI and US Cybersecurity and Infrastructure Security Agency are warning users to use a messaging app instead and one that uses end-to-end encryption. The warning comes amid a major cyberattack that has been called ‘unprecedented’ by the press. The attack has impacted telecommunications companies such as AT&T and Verizon. By using encrypted messaging apps, texters can keep their messages hidden from hackers. The hacking campaign has been nicknamed Salt Typhoon by Microsoft and it has not been fully solved. NBC News called it ‘one of the largest intelligence compromises in US history’.

Interesting. I recall that AT&T was not particularly chatty when information about a security compromise floated around. T-Mobile has experienced a few security issues as well. And Verizon? Same boat.

It is interesting that a sinking boat is an image several art generators refuse to produce. Anyway, Midjourney, after a lot of semantic wrangling, spit out this good enough image.

After reading the article, I decided to navigate to X.com and ask Grok what the options were for a person who has muscle memory for texting folks from an Apple or Android mobile device. To use Grok, navigate to the tweeter, log in, and look for the Grok word on the displayed page. I went through these hoops slowly because when I queried Google about “how to use Grok”, the Google told me I had to sign up for a premium membership. That was wrong. No wonder Google is a service I find a bit short of the target.

Here are the services Grok (Mr. Musk’s smart software designed to compete in the Big Boy AI dodgeball contest) suggested. I quote the tweet thing’s smart output:

- “Signal: Known for its strong privacy features, Signal offers end-to-end encryption for texts, voice calls, and video calls. It’s open-source, which adds to its credibility for privacy-conscious users. Signal is available on both Android and iOS, making it a great cross-platform option.

- WhatsApp: With over 2 billion users, WhatsApp is a popular choice for cross-platform messaging. It supports text, voice, and video calls with end-to-end encryption. However, it’s worth noting that WhatsApp is owned by Meta (formerly known as Facebook), which might be a concern for privacy.

- Telegram: Offers a wide range of features like large group chats, channels, bots, and self-destructing messages. While it provides end-to-end encryption in its “Secret Chat” mode, not all chats are encrypted by default, which could be a privacy concern for some users. Telegram is available on both Android and iOS.

- Facebook Messenger: Another Meta-owned service, it’s widely used due to its integration with the social network. It supports free calls, video chats, and even has features like payment options in some regions. However, its privacy policies are often scrutinized.

- Google Chat: Part of Google Workspace, this app provides a platform for both personal and work-related communications with features like spaces for team collaboration. It’s integrated well with other Google services, although its adoption outside of Google’s ecosystem is less widespread.

- Viber: Offers free calls and messages, including stickers, GIFs, and public chats. Its user base is significant in certain regions but less so globally compared to WhatsApp or Telegram. Viber also has end-to-end encryption for calls and messages.

- Spike: Described as an email-based messaging app, Spike combines email and chat functionality, making it suitable for both personal and professional use. It’s noted for its cross-platform capabilities and privacy focus.

- SimpleX Chat: An open-source, privacy-focused chat app that doesn’t require phone numbers for registration, focusing on decentralization and user privacy.

- Element (formerly Riot.im): Based on the Matrix protocol, it’s an open-source, decentralized platform that supports secure, encrypted communication. It’s good for those looking for privacy with the flexibility of self-hosting.

- Beeper: An all-in-one messaging app that aims to unify various messaging services into one platform, although it has limitations like no official iMessage support due to Apple’s policies.”

Each of these apps is allegedly an E2EE or end-to-end encrypted messaging service. These are not presented in alphabetical order. I assume that Grok is providing the names in a rank ordering based on some criteria not explained to me. But that’s the future of smart software. Just do something and say, “Good enough.”

I noted several things about this list:

- There are no Chinese messaging apps. Is this censorship from an outfit seemingly concerned about free speech? I was surprised that Grok omitted QQ and WeChat, even with appropriate surveillance notices.

- One approach is for messaging clients to rely on the Extensible Messaging and Presence Protocol (XMPP). Conversations for Android and ChatSecure for iOS were at one time options.

- Inclusion of Telegram is definitely interesting because Pavel Durov has reversed course and now cooperates with law enforcement. Telegram has even played nice with anti-CSAM organizations. The about-face coincided with his detention by French authorities.

- The Grok listing does not include new and possibly interesting services like PrivateLine.io, which illustrates the shallow nature of the knowledge exposed to these smart systems. (Even Yandex.com lists this service in its search results.)

- Alphabetizing lists is just not part of the 2024 world it seems.

There are some broader questions about encrypted messaging which are not addressed in the cited write up or the Grok “smart” output; for example:

- Are other messaging apps encrypted end to end or are there “special” operations which make the content visible and loggable once the user sends the message?

- Is the encryption method used by these apps “unbreakable”?

- Are the encryption methods home grown or based on easily inspected open source methods?

- What entities have access to either the logged data about a message or access to the message payload?

The alarm has been sounded about the failure of some US telecommunications companies to protect their own systems and by extension the security of their customers. But numerous questions remain with partial or no answers. Answers are, from my point of view, thin.

Stephen E Arnold, December 17, 2024

Google: More Quantum Claims; Some Are Incomprehensible Like Multiple Universes

December 16, 2024

This blog post is the work of an authentic dinobaby. No smart software was used.

Beleaguered Google is going all out to win a PR war against the outfits using its Transformer technology. Google should have been the de facto winner of the smart software wars. I think the president of Microsoft articulated a similar sentiment. That hurts, particularly when it comes from a person familiar with the mores and culinary delights of Mughlai cuisine. “Should have, would have, could have” — very painful to one’s ego.

I read a PR confection which spotlighted this Google need to be the “best” in the fast moving AI world. I envision Google’s leadership getting hit in the back of the head by a grandmother. My grandmother did this to me when I visited her on my way home from high school. She was frail but would creep up behind me and whack me if I did not get A’s on my report card. Well, Google, let me tell you I have the memory, but the familial whack did not help me one whit.

“Willow: Google Reveals New Quantum Chip Offering Incomprehensibly Fast Processing” is a variant of the quantum supremacy claim issued a couple of years ago. In terms of technical fluff, Google is now matching the wackiness of Intel’s revolutionary Horse-something quantum innovation. But “incomprehensibly”? Come on, BetaNews.

The PR approved write up reports:

Google says that its quantum chip took less than five minutes to perform tasks that would take even the fastest supercomputers 10 septillion years. Providing some sense of perspective, Google points out that this is “a number that vastly exceeds the age of the Universe”.

Well, what do you think about that? Google is edging toward infinity, the contemplation of which drove a dude named Cantor nuts. What is the motivation for an online advertising company, one being sued in numerous countries for a range of alleged business behaviors, to need praise for its AI achievements? The firm’s Transformer technology IS the smart software innovation.

Google reorganized its smart software division, marginalizing some heavy Google hitters. It drove out Googlers who were asking questions about baked-in algorithmic bias. It cut off discussion of the synthetic data activity. It shifted the AI research to London, a convenient 11 hours away by jet and a convenient eight time zones away from San Francisco.

The write up trots out the really fast computing trope for quantum computing:

In terms of performance, there is nothing to match Willow. The “classically hardest benchmark that can be done on a quantum computer today” was demolished in a matter of minutes. This same task would take one of the fastest supercomputer available an astonishing 10,000,000,000,000,000,000,000,000 years to work through.

Scientific notation exists for a reason. Please, pass the message to Google PR, please.

Okay, another “we are better than anyone else at quantum computing.” By extension, Google is better than anyone else at smart software and probably lots of other things that mere comprehension-seeking people claim to do.

And do you think there are multiple universes? Ah, you said, “No.” Google’s smart quantum stuff reports that you are wrong.

Let’s think about why Google has an increasing need to be held by a virtual grandmother and not whacked on the head:

- Google is simply unable to address actual problems. From the wild and crazy moon shots to the weirdness of its quantum supremacy thing, the company is claiming advances in fields essentially disconnected from the real world.

- Google believes that the halo effect of being so darned competent in quantum stuff will enhance the excellence of its other products and services.

- Google management has zero clue how to address [a] challengers to its search monopoly, [b] the negative blowback from its unending legal hassles, and [c] the feeling that it has been wronged. By golly, Google IS the leader in AI just as Google is the leader in quantum computing.

Sorry, Google, granny is going to hit you on the back of the head. Scrunch down. You know she’s there, demanding excellence which you know you cannot deliver. For a more “positive” view of Google’s PR machinations, navigate to “The Google Willow Thing.”

There must be a quantum pony in the multi-universe stable, right?

Stephen E Arnold, December 16, 2024

The Starlink Angle: Yacht, Contraband, and Global Satellite Connectivity

December 16, 2024

This blog post is the work of an authentic dinobaby. No smart software was used.

I have followed the ups and downs of Starlink satellite Internet connectivity in the Russian special operation. I have not paid much attention to more routine criminal use of the Starlink technology. Before I direct your attention to a write up about this Elon Musk enterprise, I want to mention that this use case for satellites caught my attention with Equatorial Communications’ innovations in 1979. Kudos to that outfit!

“Police Demands Starlink to Reveal Buyer of Device Found in $4.2 Billion Drug Bust” has a snappy subtitle:

Smugglers were caught with 13,227 pounds of meth

Hmmm. That works out to 6,000 kilograms or 6.6 short tons of meth worth an estimated $4 billion on the open market. And it is the government of India chasing the case. (Starlink is not yet licensed for operation in that country.)
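For readers who want to check the arithmetic, here is a minimal Python sketch. It assumes the standard definitions of the avoirdupois pound (exactly 0.45359237 kg) and the US short ton (2,000 pounds); the function names are illustrative and do not come from the cited article.

```python
# Standard definitions: 1 avoirdupois pound = 0.45359237 kg (exact);
# 1 US short ton = 2,000 pounds.
LB_TO_KG = 0.45359237
LB_PER_SHORT_TON = 2000


def pounds_to_kg(lb: float) -> float:
    """Convert pounds to kilograms."""
    return lb * LB_TO_KG


def pounds_to_short_tons(lb: float) -> float:
    """Convert pounds to US short tons."""
    return lb / LB_PER_SHORT_TON


seized_lb = 13_227  # figure from the article's subtitle
print(f"{pounds_to_kg(seized_lb):,.0f} kg")                # → 6,000 kg
print(f"{pounds_to_short_tons(seized_lb):.1f} short tons")  # → 6.6 short tons
```

The exact result is about 5,999.7 kilograms, so the 6,000 kilogram figure is a fair rounding.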

The write up states:

officials have sent Starlink a police notice asking for details about the purchaser of one of its Starlink Mini internet devices that was found on the boat. It asks for the buyer’s name and payment method, registration details, and where the device was used during the smugglers’ time in international waters. The notice also asks for the mobile number and email registered to the Starlink account.

The write up points out:

Starlink has spent years trying to secure licenses to operate in India. It appeared to have been successful last month when the country’s telecom minister said Starlink was in the process of procuring clearances. The company has not yet secured these licenses, so it might be more willing than usual to help the authorities in this instance.

Starlink is interesting because it is a commercial enterprise operating in a freewheeling manner. Starlink’s response is not known as of December 12, 2024.

Stephen E Arnold, December 16, 2024

Good Enough Junk Food: Knowledge without the Work

December 16, 2024

This write up emerged from the dinobaby’s own mind. Yes, the dinobaby used AI because this write up is about the knowledge value lost with smart software. Intellectual junk food is not the same as my mother’s overcooked chicken which she killed on her aunt’s farm. Cluck, cluck, squawk.

I plugged the Guardian’s opinion essay into You.com’s Chat GPT-4o model. You can read the original essay titled “Is Doom Scrolling Really Rotting Our Brains? The Evidence Is Getting Harder to Ignore.” The original essay is clear, reasonably well researched, and structured to speak to [a] a person who has access to the Guardian online or in (gasp!) a print form, [b] who reads the Guardian’s opinion essays, and [c] who has the ability to recognize that “brain rot” has probably affected that individual, co-workers, government leaders, children, and people who repair refrigerators.

This illustration is good enough and it was produced by the AI system Magic Studio.

Here’s the summary Chat GPT-4o spit out:

The essay critiques the decline of human intellect in the digital age, using the viral “Skibidi Toilet” video as a cultural metaphor for deteriorating focus and intellectual engagement. The Oxford English Dictionary’s 2024 word of the year, “brain rot”, reflects this phenomenon, defined as the mental degradation caused by excessive consumption of trivial online content. The essay draws on academic research to demonstrate how compulsive internet use is literally shrinking grey matter, weakening memory, shortening attention spans, and distorting cognitive functions. Studies from Harvard, Oxford, and King’s College reveal the harmful effects of media multitasking and heavy internet usage, with findings such as reduced grey matter in the brain and the risk of “digital dementia” in young people during critical developmental years.

The prevalence of infinite scroll and dopamine-driven design in apps is highlighted as a deliberate tactic by tech companies to exploit human psychology for profit, creating powerful addiction loops. Former Google design ethicist Tristan Harris warns that this “race to the bottom of the brain stem” manipulates fear, outrage, and reward systems, potentially driving society to catastrophe if left unchecked.

Despite the grim outlook, the essay finds hope in the growing self-awareness among younger generations, who coined the term “brain rot” and recognize its effects. Movements advocating for reduced technology use, such as switching to dumbphones or promoting smartphone-free childhoods, are gaining traction. These trends suggest the possibility of reclaiming mental clarity and focus, countering the current trajectory of cognitive decline.

Ultimately, the essay positions “Skibidi Toilet” as both a symptom of modern intellectual decay and a call to action. Humanity stands at a critical juncture: to either rise above the current trends of distraction and degradation or succumb further to the “U-bend” of intellectual regression.

For what it is worth, this is a typical bite-sized knowledge cookie. No one will know if the summary is good or bad unless that person takes the time to get the original full text and compare it with this AI generated output. The informational fast food provides a sugar jolt from saving time or the summary consumer’s belief that the important information is on the money. A knowledge cookie if you will, or maybe intellectual junk food?

Is this summary good enough? From my point of view, it is just okay; that is, good enough. What else is required? Flash back to 1982, the ABI/INFORM database was a commercial success. A couple of competitors were trying to capture our customers which was tricky. Intermediaries like Dialog Information Services, ESA, LexisNexis (remember Buster and his silver jumpsuit?), among others “owned” the direct relationship with the companies that paid the intermediaries to use the commercial databases on their systems. Then the intermediaries shared some information with us, the database producers.

How did a special librarian or a researcher “find” or “know about” our database? The savvy database producers provided information to the individuals interested in a business and management related commercial database. We participated in niche trade shows. We held training programs and publicized them with our partners Dow Jones News Retrieval, Investext, Predicasts, and Disclosure, among a few others. Our senior professionals gave lectures about controlled term indexing, the value of classification codes, and specific techniques to retrieve a handful of relevant citations and abstracts from our online archive. We issued news releases about new sources of information we added, in most cases with permission of the publisher.

We did not use machine indexing. We did have a wizard who created a couple of automatic indexing systems. However, when we saw the results of what the software of that era could do, we fell back on human indexers, many of whom had professional training in the subject matter they were indexing. A good example was our coverage of real estate management activities. The person who handled this content was a lawyer who preferred reading and working in our offices. At this time, the database was owned by the Courier-Journal & Louisville Times Co. The owner of the privately held firm was an early adopter of online and electronic technology. He took considerable pride in our lineup of online databases. When he hired me, I recall his telling me, “Make the databases as good as you can.”

How did we create a business and management database that generated millions in revenue and whose index was used by entities like the Royal Bank of Canada to index its internal business information?

Here’s the secret sauce:

- We selected sources, in most cases business journals, publications, and some other types of business related content; for example, the ANBAR management reports.

- The selection of which specific article to summarize was the responsibility of a managing editor with deep business knowledge

- Once an article was flagged as suitable for ABI/INFORM, it was routed to the specialist who created a summary of the source article. At that time, ABI/INFORM summaries or “abstracts” were limited to 150 words, excluding the metadata.

- An indexing specialist would then read the abstract and assign quite specific index terms from our proprietary controlled vocabulary. The indexing included four to six index terms from our controlled vocabulary and a classification code like 7700 to indicate “marketing,” with additional two-digit indicators to make explicit that the source document was about marketing and direct mail or some similar subcategory of marketing. We also included codes to disambiguate between a railroad terminal and a computer terminal because source documents assumed the reader would “know” the specific field to which the term’s meaning belonged. We added geographic codes, so the person looking for information could locate employee stock ownership in a specific geographic region like Northern California, and a number of other codes specifically designed to allow precise, comprehensive retrieval of abstracts about business and management. Some of the systems permitted free text searching of the abstract, and we considered that a supplement to our quite detailed indexing.

- Each abstract and its index terms were checked by a quality control process using people who had demonstrated their interest in our product and their ability to double-check the indexing.

- We had proprietary “content management systems” and these generated the specific file formats required by our intermediaries.

- Each week we updated our database and we were exploring daily updates for our companion product called Business Dateline when the Courier Journal was broken up and the database operation sold to a movie camera company, Bell+Howell.
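The workflow above can be sketched as an indexed record plus a fielded search. This is a minimal Python illustration under stated assumptions, not the ABI/INFORM system: the vocabulary entries, the "N-CAL" geographic code, and the function names are hypothetical. Only the 150-word abstract limit, the four to six index terms, the 7700 marketing code, and the terminal disambiguation come from the description above.

```python
from dataclasses import dataclass, field

# Hypothetical controlled vocabulary; the real one was proprietary.
# "Railroad terminals" and "Computer terminals" are separate entries,
# which is how a controlled vocabulary disambiguates "terminal".
CONTROLLED_VOCAB = {
    "Marketing", "Direct mail", "Advertising", "Small business",
    "Employee stock ownership plans", "Real estate management",
    "Railroad terminals", "Computer terminals",
}

MAX_ABSTRACT_WORDS = 150  # limit stated in the post (metadata excluded)


@dataclass
class IndexedAbstract:
    title: str
    abstract: str
    terms: list[str]        # four to six controlled-vocabulary terms
    class_codes: list[str]  # e.g. "7700" for marketing; subcategory digits not modeled
    geo_codes: list[str] = field(default_factory=list)  # hypothetical region codes

    def qc_problems(self) -> list[str]:
        """Checks a human quality-control reviewer might apply (a sketch)."""
        problems = []
        if len(self.abstract.split()) > MAX_ABSTRACT_WORDS:
            problems.append("abstract longer than 150 words")
        if not 4 <= len(self.terms) <= 6:
            problems.append("needs four to six index terms")
        unknown = [t for t in self.terms if t not in CONTROLLED_VOCAB]
        if unknown:
            problems.append(f"terms outside vocabulary: {unknown}")
        return problems


def fielded_search(records, term=None, class_prefix=None, geo=None):
    """Match on assigned index fields only; the abstract text is not consulted."""
    hits = []
    for rec in records:
        if term is not None and term not in rec.terms:
            continue
        if class_prefix is not None and not any(
            code.startswith(class_prefix) for code in rec.class_codes
        ):
            continue
        if geo is not None and geo not in rec.geo_codes:
            continue
        hits.append(rec)
    return hits


if __name__ == "__main__":
    rec = IndexedAbstract(
        title="Direct mail for regional retailers",
        abstract="A short illustrative abstract about direct mail campaigns.",
        terms=["Marketing", "Direct mail", "Small business", "Advertising"],
        class_codes=["7700"],
        geo_codes=["N-CAL"],  # hypothetical code for Northern California
    )
    print(rec.qc_problems())  # → []
    print(len(fielded_search([rec], term="Direct mail", class_prefix="77", geo="N-CAL")))  # → 1
    print(len(fielded_search([rec], term="Computer terminals")))  # → 0
```

The design choice worth noticing is that the search never looks at the abstract's words; it relies entirely on what the human indexers assigned.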

Chat GPT-4o created the 300-word summary without human knowledge, expertise, or effort. Consequently, these knowledge-based workflows have been replaced by smart software which can produce a summary in less than 30 seconds.

And that summary is, from my point of view, good enough. There are some trade offs:

- Chat GPT-4o is reactive. Feed it a url or a text, and it will summarize it. Gone is the knowledge-based approach to select a specific, high-value source document for inclusion in the database. Our focus was informed selection. People paid to access the database because of the informed choice about what to put in the database.

- The summary does not capture the key points and actionable elements of the source document that an ABI/INFORM abstract would. The summary is what a high school or junior college graduate would create if a writing teacher assigned a “how to write a précis” exercise as part of the course requirements. In general, high school and junior college graduates are not into nuance and cannot determine the pivotal information payload in a source document.

- The precise indexing and tagging is absent. One could create 1,000 such summaries, toss them in MISTRAL, and do a search. The result is great if one is uninformed about the importance of editorial policies, knowledge-based workflows, and precise, thorough indexing.

The reason I am sharing some of this “ancient” online history is:

- The loss of quality in online information is far more serious than most people understand. Getting a summary today is no big deal. What’s lost is simply not on these individuals’ radar.

- The lack of an editorial policy, precise date and time information, and fine-grained indexing means that one has to wade through a mass of undifferentiated information. ABI/INFORM in the 1980s delivered a handful of citations directly on point with the user’s query. Today no one knows or cares about precision and recall.

- It is now more difficult than at any other time in my professional work career to locate needed information. Public libraries do not have the money to obtain reference materials, books, journals, and other content. If the content is online, it is a dumbed down and often cut rate version of the old-fashioned commercial databases created by informed professionals.

- People look up information online and remain dumb; that is, the majority of the people with whom I come in contact routinely ask me and my team, “Where do you get your information?” We even have a slide in our CyberSocial lecture about “how” and “where.” The analysts and researchers in the audience usually don’t know, so an entire subculture of open source information professionals has come into existence. These people are largely on their own and have to do work which once was a matter of querying a database like ABI/INFORM, Predicasts, Disclosure, Agricola, etc.
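Precision and recall have standard definitions in information retrieval: precision is the share of retrieved items that are relevant, and recall is the share of relevant items that were retrieved. A minimal Python sketch, using made-up document IDs purely for illustration:

```python
def precision_recall(retrieved: set[str], relevant: set[str]) -> tuple[float, float]:
    """Precision: share of retrieved items that are relevant.
    Recall: share of relevant items that were retrieved."""
    hits = retrieved & relevant
    precision = len(hits) / len(retrieved) if retrieved else 0.0
    recall = len(hits) / len(relevant) if relevant else 0.0
    return precision, recall


# Hypothetical document IDs, for illustration only.
relevant = {"d1", "d2", "d3", "d4"}  # everything on point in the collection
retrieved = {"d1", "d2", "d9"}       # what a query returned

p, r = precision_recall(retrieved, relevant)
print(f"precision={p:.2f} recall={r:.2f}")  # → precision=0.67 recall=0.50
```

A handful of citations "directly on point" is a high-precision result; whether it also has high recall depends on how many relevant items the query missed.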

Sure, the essay is good. The summary is good enough. Where does that leave a person trying to understand the factual and logical errors in a new book examining social media? In my opinion, people are in the dark and have a difficult time finding information. Making decisions in the dark or without on-point, accurate information is a recipe for a really bad batch of cookies.

Stephen E Arnold, December 15, 2024

China Good, US Bad: Australia Reports the Unwelcome News

December 13, 2024

This write up was created by an actual 80-year-old dinobaby. If there is art, assume that smart software was involved. Just a tip.

This write up was created by an actual 80-year-old dinobaby. If there is art, assume that smart software was involved. Just a tip.

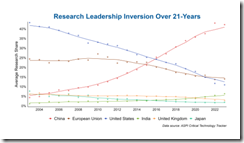

I read “Critical Technology Tracker: Two Decades of Data Show Rewards of Long-Term Investment.” The write up was issued in September 2024, and I have no confidence that much has changed. I believe the US is the leader in marketing hyperbole output. Other countries are far behind, but some are closing the gaps. I will focus on the article, and I will leave it to you to read the full report available from the ASPI Australia Web site.

The main point of this report by the Australian Strategic Policy Institute is that the US has not invested in long-term research. I am not sure how much of this statement is a surprise to those who have watched US patents become idea recyclers, US education deteriorate, and the fascinating quest for big money take over.

The cited summary of the research reports:

The US led in 60 of 64 technologies in the five years from 2003 to 2007, but in the most recent five year period, it was leading in just seven.

I want to point out that playing online games and doom scrolling are not fundamental technologies. The US has a firm grip on the downstream effects of applied technology. The fundamentals are simply not there. AI, which seems to be everywhere, is little more than word probability, which is not a fundamental; it is an application of methods.

The cited article points out:

The chart is easy to read. The red line heading up is China. The blue line going down is the US.

In what areas are China’s researchers making headway other than its ability to terminate some US imports quickly? Here’s what the cited article reports:

China has made its new gains in quantum sensors, high-performance computing, gravitational sensors, space launch and advanced integrated circuit design and fabrication (semiconductor chip making). The US leads in quantum computing, vaccines and medical countermeasures, nuclear medicine and radiotherapy, small satellites, atomic clocks, genetic engineering and natural language processing.

The list, one can argue, is arbitrary and easily countered by US researchers. There are patents, start ups, big financial winners, and many fine research institutions. With AI poised to become really smart in a few years, why worry?

I am not worried because I am old. The people who need to worry are the parents of children who cannot read and comprehend, who do not study and master mathematics, who do not show much interest in basic science, and are indifferent to the concept of work ethic.

Australia is worried. It is making an attempt to choke off the perceived corrosive effects of the US social media juggernaut for those under 16 years of age. It is monitoring China’s activities in the Pacific. It is making an effort to enhance its military capabilities.

Is America worried? I would characterize the attitude here in rural Kentucky with the catchphrase of Mad Magazine’s mascot: “What, me worry?”

Stephen E Arnold, December 13, 2024

ChatGPT: The New Chegg

December 13, 2024

Chegg is an education outfit. The firm has faced some magnetic interference related to its academic compass. An outfit in Australia has suggested that Chegg makes it possible for a student to obtain some assistance in order to complete certain work. Beyond Search knew AI would displace some workers and maybe even shutter some companies. But it is hard to find sympathy for this particular victim. “Chegg Is on Its Last Legs After ChatGPT Sent Its Stock Down 99%,” reports Gizmodo. So industrial scale cheating kills rich-kid cheating. Oh no.

Those of us who got our college degrees last century may not be familiar with Chegg. Writer Thomas Maxwell explains:

“[Chegg] started out in the 2000s renting out textbooks and later expanded into online study guides, and eventually into a platform with pre-written answers to common homework questions. Unfortunately, the launch of ChatGPT all but annihilated Chegg’s business model. The company for years paid thousands of contractors to write answers to questions across every major subject, which is quite a labor intensive process—and there’s no guarantee they will even have the answer to your question. ChatGPT, on the other hand, has ingested pretty much the entire internet and has likely seen any history question you might throw at it.”

Yep. The Wall Street Journal reports Chegg put off developing its own AI tools because of machine learning’s propensity for wrong answers. And rightly so. Maxwell suggests the firm might be able to make that case to “curious” students, but we agree that would be a long shot at this point. If Chegg does indeed go under, we will not mourn. But what other businesses, and the workers they support, will be next to fall?

Does the US smart software sector care if their products help students appear smarter and more diligent than they are in real life? Nope. Success in the US is, like much of the high-technology hoo-hah, creating a story and selling illusion. School education is collateral damage.

Cynthia Murrell, December 13, 2024

Autonomous AI Agents: The Next Big Thing for a Rolodex-Type Service

December 13, 2024

Are the days of large language models numbered? Yes, according to the CEO and co-founder of Salesforce. Finance site Benzinga shares, “Marc Benioff Says Future of AI Not in Bots Like ChatGPT But In Autonomous Agents.” Writer Ananya Gairola points to a recent Wall Street Journal podcast in which Benioff shared his thoughts:

“He stated that the next phase of AI development will focus on autonomous agents, which can perform tasks independently, rather than relying on LLMs to drive advancements. He argued that while AI tools like ChatGPT have received significant attention, the real potential lies in agents. ‘Has the AI taken over? No. Has AI cured cancer? No. Is AI curing climate change? No. So we have to keep things in perspective here,’ he stated. Salesforce provides both prebuilt and customizable AI agents for businesses looking to automate customer service functions. ‘But we are not at that moment that we’ve seen in these crazy movies — and maybe we will be one day, but that is not where we are today,’ Benioff stated during the podcast.”

Someday, he says. But it would seem the race is on. Gairola notes OpenAI is poised to launch its own autonomous AI agent in January. Will that company dominate the autonomous AI field, as it has with generative AI? Will the new bots come equipped with bias and hallucinations? Stay tuned.

Cynthia Murrell, December 13, 2024

FOGINT: Telegram Steps Up Its Cooperation with Law Enforcement

December 12, 2024

This short item is the work of the dinobaby. The “fog” is from Gifr.com.

Engadget, an online news service, reported “Telegram Finally Takes Action to Remove CSAM from Its Platform.” France picked up Telegram founder Pavel Durov and, through his attorney, explained how the prison system works in the country. Mr. Durov, not yet in prison, posted an alleged €5 million bail with the understanding that he could not leave the country. According to Engadget, Mr. Durov is further modifying his attitude toward “free speech” and “freedom.”

The article states:

Telegram is taking a significant step to reduce child sexual abuse material (CSAM), partnering with the Internet Watch Foundation (IWF) four months after the former’s founder and CEO Pavel Durov was arrested. The French authorities issued 12 charges against Durov in August, including complicity in “distributing, offering or making available pornographic images of minors, in an organized group” and “possessing pornographic images of minors.”

For those not familiar with the Internet Watch Foundation, the organization serves as a “hub” linking law enforcement with the companies whose services act as intermediaries for those engaged in buying, leasing, selling, or exchanging illicit images or videos of children. Since 2013, Telegram has mostly been obstinate when asked to cooperate with investigators. The company has waved its hands and insisted that it is not into curtailing free speech.

After the French snagged Mr. Durov, he showed a sudden interest in cooperating with authorities. The Engadget report says:

Telegram has taken other steps since Durov’s arrest, announcing in September that it would hand over IP addresses and phone numbers in legal requests — something it fought in the past. Durov must remain in France for the foreseeable future.

What’s Telegram going to do after releasing handles, phone numbers, and possibly some of that log data allegedly held in servers available to the company? The answer is, “Telegram is pursuing its next big thing.” Engadget does not ask, “What’s Telegram’s next act?” Surprisingly, a preview of Telegram’s future is unfolding in TON Foundation training sessions in Vancouver, Istanbul, and numerous other locations.

But taking that next step and doing the “real” work is not in the cards for most Telegram watchers. The “finally” is simply bringing down the curtain on Telegram’s first act. More acts are already on stage.

Stephen E Arnold, December 12, 2024

Telegram: Edging Forward in Crypto

December 12, 2024

This blog post flowed from the sluggish and infertile mind of a real live dinobaby. If there is art, smart software of some type was probably involved.

Telegram wants to be the one-stop app for anonymous crypto tasks. While we applaud those efforts when they relate to freedom fighting or undermining bad actors, bad actors also use these tools, and we can’t abide by that. Telegram, however, plans to become the API for crypto communication, says Cryptologia in “DWF Labs’ Listing Bot Goes Live On Telegram.”

DWF Labs is a crypto venture capital firm, and it is launching a Listing Bot on Telegram. The bot turns Telegram into a crypto feed by notifying users of changes on ten major crypto exchanges: Binance, HTX, Gate.io, Bybit, OKX, KuCoin, MEXC, Coinbase Exchange, UpBit, and Bithumb. Users can also watch currency pairs, launchpad announcements, and spot and/or futures listings.

DWF Labs is at the forefront of alternative currency and financial options. It is a lucrative market:

“In a recent interview, Lingling Jiang, a partner at DWF Labs, discussed DWF Labs’ position at the forefront of providing liquidity and forging alliances with traditional finance. By offering market-making support and investment, Jiang said, DWF Labs provides projects the infrastructure needed for tokenized assets. With the launch of the new Listing Bot, DWF Labs brings market data closer to retail users, especially those on the Telegram (TON) network. Following the introduction of HOT, a non-custodial wallet on TON powered by Chain Signature, DWF Labs’ Listing Bot is another welcome addition to the ecosystem, especially in light of the recent announcement of HOT Labs, HERE Wallet and HAPI’s new joint crypto platform.”

What’s Telegram’s game for 2025? Spring Durov? Join hands with BRICS? Become the new Morgan Stanley? Father more babies?

Whitney Grace, December 12, 2024

Do Not Worry About Tomorrow. Worry About Tod”AI”

December 12, 2024

This blog post flowed from the sluggish and infertile mind of a real live dinobaby. If there is art, smart software of some type was probably involved.

According to deep learning pioneer Yoshua Bengio, we may be headed for utopia—at least if one is a certain wealthy tech-bro type. For the rest of us, not so much. The Byte tells us, “Godfather of AI Warns of Powerful People who Want Humans ‘Replaced by Machines’.” He is not referring to transhumanism, which might ultimately seek to transform humans into machines. No, this position is about taking people out of the equation entirely. Except those at the top, presumably. Reporter Noor Al-Sibai writes:

“In an interview with CNBC, computer science luminary Yoshua Bengio said that members of an elite tech ‘fringe’ want AI to replace humans. The head of the University of Montreal’s Institute for Learning Algorithms, Bengio was among the public signatories of the ‘Right to Warn‘ open letter penned by leading AI researchers at OpenAI who claim they’re being silenced about the technology’s dangers. Along with famed experts Yann LeCun and Geoffrey Hinton, he’s sometimes referred to as one of the ‘Godfathers of AI.’ ‘Intelligence gives power. So who’s going to control that power?’ the preeminent machine learning expert told the outlet during the One Young World Summit in Montreal. ‘There are people who might want to abuse that power, and there are people who might be happy to see humanity replaced by machines,’ Bengio claimed. ‘I mean, it’s a fringe, but these people can have a lot of power, and they can do it unless we put the right guardrails right now.’”

Indeed. This is not the first time the esteemed computer scientist has rung AI alarm bells. As Bengio notes, those who can afford to build AI systems are very, very rich. And money leads to other types of power. Political and military power. Can government regulations catch up to these players? Only if it takes them more than five years to attain artificial general intelligence, he predicts. The race for the future of humanity is being evaluated by what’s cheaper, not better.

Cynthia Murrell, December 12, 2024