Google: Trying Hard Not to Be Noticed in a Crypto Club

December 16, 2025

Another dinobaby post. No AI unless it is an image. This dinobaby is not Grandma Moses, just Grandpa Arnold.

Google continues to creep into crypto. Google has interacted with ANT Financial. Google has invested in some interesting compute services. And now Google will, if “Exclusive: YouTube Launches Option for U.S. Creators to Receive Stablecoin Payouts through PayPal” is on the money, give crypto a whirl among its creators.

A friendly creature warms up in a yoga studio. Few notice the suave green beast. But one person spots a subtle touch: Pink gym shoes purchased with PayPal crypto. Such a deal. Thanks, Venice.ai. Good enough.

The Fortune article reports as actual factual:

A spokesperson for Google, which owns YouTube, confirmed the video site has added payouts for creators in PayPal’s stablecoin but declined to comment further. YouTube is already an existing customer of PayPal’s and uses the fintech giant’s payouts service, which helps large enterprises pay gig workers and contractors.

How does this work?

Based on the research we did for our crypto lectures, a YouTuber in the US would have to have a PayPal account. Google puts the payment in PayPal’s crypto in the account. The YouTuber would then use PayPal to convert PayPal crypto into US dollars. Then the YouTuber could move the US dollars to his or her US bank account. Allegedly there would be no gas fee slapped on the transactions, but there is an opportunity to add service charges at some point. (I mean what self-respecting MBA angling for a promotion wouldn’t propose that money-making idea?)

Several observations:

- In my new monograph “The Telegram Labyrinth” available only to law enforcement officials, we identified Google as one of the firms moving in what we call the “Telegram direction.” The Google crypto creeps plus PayPal reinforce that observation. Why? Money and information.

- Information about how Google’s activities in crypto will conform to assorted money related rules and regulations is not clear to me. Furthermore, when we completed our “The Telegram Labyrinth” research in early September 2025, not too many people were thinking about Google as a crypto player. But that GOOGcoin does seem like something even the lowest level wizard at Alphabet could envision, doesn’t it?

- Google has a track record of doing what it wants. Therefore, in my opinion, more little tests, baby steps, and semi-low profile moves probably are in the wild. Hopefully someone will start looking.

Net net: Google does do pretty much what it wants to do. From gaining new training data from its mobile-to-ear-bud translation service to expanding its AI capabilities with its new silicon, the Google is a giant creature doing some low impact exercises. When the Google shifts to lifting big iron, a number of interesting challenges will arise. Are regulators ready? Are online fraud investigators ready? Is Microsoft ready?

What’s your answer?

Stephen E Arnold, December 16, 2025

Ka-Ching: The EU Cash Registers Tolls for the Google

December 16, 2025

Another dinobaby post. No AI unless it is an image. This dinobaby is not Grandma Moses, just Grandpa Arnold.

Thomson Reuters, the trust outfit because they say the company is, published another ka-ching story titled “Exclusive: Google Faces Fines Over Google Play if It Doesn’t Make More Concessions, Sources Say.” The story reports:

Alphabet’s Google is set to be hit with a potentially large EU fine early next year if it does not do more to ensure that its app store complies with EU rules aimed at ensuring fair access and competition, people with direct knowledge of the matter said.

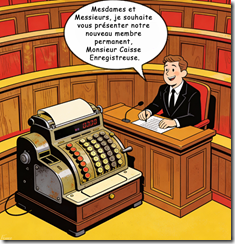

An elected EU official introduces the new and permanent member of the parliament. Thanks, Venice.ai. Not exactly what I specified, but saving money on compute cycles is the name of the game today. Good enough.

I can hear the “Sorry. We’re really, really sorry” statement now. I can even anticipate the sequence of events; hence and herewith:

- Google says, “We believe we have complied.”

- The EU says, “Pay up.”

- Google says, “Let’s go to trial.”

- The EU says, “Fine with us.”

- The Google says, “We are innocent and have complied.”

- The EU says, “You are guilty and owe $X millions of dollars.” (Note: The EU generates more revenue by fining US big tech companies than it does from certain tax streams, I have heard.)

- The Google says, “Let’s negotiate.”

- The EU says, “Fine with us.”

- Google negotiates and says, “We have a deal plus we did nothing wrong.”

- The EU says, “Pay X millions less the Y millions we agree to deduct based on our fruitful negotiations.”

The actual factual article says:

DMA fines can be as much as 10% of a company’s global annual revenue. The Commission has also charged Google with favoring its associated search services in Google Search, and is investigating its use of online content for its artificial intelligence tools and services and its spam policy.

My interpretation of this snippet is that the EU has on deck another case of Google’s alleged law breaking. This is predictable, and the approach does generate revenue from companies with lots of cash.

Stephen E Arnold, December 16, 2025

The EU – Google Soap Opera Titled “What? Train AI?”

December 16, 2025

Another dinobaby post. No AI unless it is an image. This dinobaby is not Grandma Moses, just Grandpa Arnold.

Ka-ching. That’s the sound of the EU ringing up another fine for one of its favorite US big tech outfits. Once again it is Googzilla in the headlights of a restored 2CV. Here’s the pattern:

- EU fines

- Googzilla goes to court

- EU finds Googzilla guilty

- Googzilla appeals

- EU finds Googzilla guilty

- Googzilla negotiates and says, “We don’t agree but we will pay”

- Go back to item 1.

This version of the EU soap opera is called training Gemini on whatever content Google has.

The formal announcement of Googzilla’s re-run of a fan favorite is “Commission Opens Investigation into Possible Anticompetitive Conduct by Google in the Use of Online Content for AI Purposes.” I note the hedge word “possible,” but as soap opera fans we know the arc of this story. Can you hear the cackle of the legal eagles anticipating the billings? I can.

The mythical creature Googzilla apologizes to an august body for a mistake. Googzilla is very, very sincere. Thanks, MidJourney. Actually pretty good this morning. Too bad you are not consistent.

The cited show runner document says:

The European Commission has opened a formal antitrust investigation to assess whether Google has breached EU competition rules by using the content of web publishers, as well as content uploaded on the online video-sharing platform YouTube, for artificial intelligence (‘AI’) purposes. The investigation will notably examine whether Google is distorting competition by imposing unfair terms and conditions on publishers and content creators, or by granting itself privileged access to such content, thereby placing developers of rival AI models at a disadvantage.

The EU is trying via legal process to alter the DNA of Googzilla. I am fond of pointing out that beavers do what beavers do. Similarly Googzillas do exactly what the one and unique Googzilla does; that is, anything it wants to do. Why? Googzilla is now entering its prime. It has a small wound on its knee. If examined closely, it is a scar that seems to be the word “monopoly.”

News flash: Filing legal motions against Googzilla will not change its DNA. The outfit is purpose built to keep control of its billions of users and keep the snoops from do-gooder and regulatory outfits clueless about what happens to [a] the parsed and tagged data, [b] the metrics thereof, [c] the email, the messages, and the voice data, [d] the YouTube data, and [e] whatever data flows into the Googzilla’s maw from advertisers, ad systems, and ad clickers.

The EU does not get the message. I wrote three books about Google, and it was pretty evident in the first one (The Google Legacy) that baby Google, the equivalent of a young Maradona or Messi, was going to wear a jersey with Googzilla 10 emblazoned on its comely yet spiky back.

The write up contains this statement from Teresa Ribera, Executive Vice-President for Clean, Just and Competitive Transition:

A free and democratic society depends on diverse media, open access to information, and a vibrant creative landscape. These values are central to who we are as Europeans. AI is bringing remarkable innovation and many benefits for people and businesses across Europe, but this progress cannot come at the expense of the principles at the heart of our societies. This is why we are investigating whether Google may have imposed unfair terms and conditions on publishers and content creators, while placing rival AI models developers at a disadvantage, in breach of EU competition rules.

Interesting idea as the EU and the US stumble to the side of the street where these ideas are not too popular.

Net net: Googzilla will not change for the foreseeable future. Furthermore, those who don’t understand this are unlikely to get a job at the company.

Stephen E Arnold, December 16, 2025

A Thought for the New Year: Be Techy

December 16, 2025

George Orwell wrote in 1984: “Who controls the past controls the future. Who controls the present controls the past.” The Guardian published an article that embodies this quote entitled: “How Big Tech Is Creating Its Own Friendly Media Bubble To ‘Win The Narrative Battle Online’.”

Big Tech billionaire CEOs aren’t cast in the best light these days. In order to counteract the negative attitudes towards their leaders, Big Tech companies are giving their CEOs Walt Disney makeovers. If you didn’t know, Disney wasn’t the congenial uncle figure his company likes to portray him as. Walt was actually an OCD micromanager with a short temper and tendencies reminiscent of bipolar disorder. Big Tech CEOs are portraying themselves as nice guys in cozy interviews via news outlets they own or are copacetic with.

Big Tech leaders are doing this because the public doesn’t trust them:

“The rise of tech’s new media is also part of a larger shift in how public figures are presenting themselves and the level of access they are willing to give journalists. The tech industry has a long history of being sensitive around media and closely guarded about their operations, a tendency that has intensified following scandals…”

The content they’re delivering isn’t that great though:

“The content that the tech industry is creating is frequently a reflection of how its elites see themselves and the world they want to build – one with less government regulation and fewer probing questions on how their companies are run. Even the most banal questions can also be a glimpse into the heads of people who exist primarily in guarded board rooms and gated compounds.”

The responses are typical of entitled, out-of-touch idiots. They’re smart in their corner of the world but can’t relate to the working individual. Happy New Year!

Whitney Grace, December 16, 2025

Do Not Mess with the Mouse, Google

December 15, 2025

Another dinobaby post. No AI unless it is an image. This dinobaby is not Grandma Moses, just Grandpa Arnold.

“Google Removes AI Videos of Disney Characters After Cease and Desist Letter” made me visualize an image of Godzilla which I sometimes misspell as Googzilla frightened of a mouse; specifically, a quite angry Mickey. Why?

A mouse causes a fearsome creature to jump on the kitchen chair. Who knew a mouse could roar? Who knew the green beast would listen? Thanks, Qwen. Your mouse does not look like one of Disney’s characters. Good for you.

The write up says:

Google has removed dozens of AI-generated videos that depicted Disney-owned characters after receiving a cease and desist letter from the studio on Wednesday. Disney flagged the YouTube links to the videos in its letter, and demanded that Google remove them immediately.

What adds an interesting twist to this otherwise ho hum story about copyright viewed as an old-fashioned concept is that Walt Disney invested in OpenAI and worked out a deal for some OpenAI customers to output Disney-okayed images. (How this will work out as prompt wizards try to put Minnie, Snow White, and probably most of the seven dwarves in compromising situations I don’t know. If I were 15 years old, I would probably experiment to find a way to generate an image involving Prince Charming and the Lion King in a bus station facility violating one another and the law. But now? Nah, I don’t care what Disney, ChatGPT users, and AI do. Have at it.)

The write up says that Google said:

“We have a longstanding and mutually beneficial relationship with Disney, and will continue to engage with them,” the company said. “More generally, we use public data from the open web to build our AI and have built additional innovative copyright controls like Google-extended and Content ID for YouTube, which give sites and copyright holders control over their content.”

Yeah, how did that work out when YouTube TV subscribers lost access to some Disney content? Then, Google asked users who paid for content and did not get it to figure out how to sign up to get the refund. Yep, beneficial.

Something caused the Google to jump on a kitchen chair when the mouse said, “See you in court. Bring your checkbook.”

I thought Google was too big to obey any entity other than its own mental confections. I was wrong again. When will the EU find a mouse-type tactic?

Stephen E Arnold, December 15, 2025

How Not to Get a Holiday Invite: The Engadget Method

December 15, 2025

Another dinobaby post. No AI unless it is an image. This dinobaby is not Grandma Moses, just Grandpa Arnold.

Sam AI-Man may not invite anyone from Engadget to a holiday party. I read “OpenAI’s House of Cards Seems Primed to Collapse.” The “house of cards” phrase gives away the game. Sam AI-Man built a structure that gravity or Google will pull down. How do I know? Check out this subtitle:

In 2025, it fell behind the one company it couldn’t lose ground to: Google.

The Google. The outfit that shifted into Red Alert or whatever the McKinsey playbook said to call an existential crisis klaxon. The Google. Adjudged a monopoly getting down to work other than running an online advertising system. The Google. An expert in reorganizing a somewhat loosely structured organization. The Google: Everyone except the EU and some allegedly defunded YouTube creators absolutely loves. That Google.

Thanks Venice.ai. I appreciate your telling me I cannot output an image with a “young programmer.” Plugging in “30 year old coder” worked. Very helpful. Intelligent too.

The write up points out:

It’s safe to say GPT-5 hasn’t lived up to anyone’s expectations, including OpenAI’s own. The company touted the system as smarter, faster and better than all of its previous models, but after users got their hands on it, they complained of a chatbot that made surprisingly dumb mistakes and didn’t have much of a personality. For many, GPT-5 felt like a downgrade compared to the older, simpler GPT-4o. That’s a position no AI company wants to be in, let alone one that has taken on as much investment as OpenAI.

Did OpenAI suck it up and crank out a better mouse trap? The write up reports:

With novelty and technical prowess no longer on its side though, it’s now on Altman to prove in short order why his company still deserves such unprecedented levels of investment.

Forget the problems a failed OpenAI poses to investors, employees, and users. Sam AI-Man now has an opportunity to become the highest profile technology professional to cause a national and possibly global recession. Short of war mongering countries, Sam AI-Man will stand alone. He may end up in a museum if any remain open when funding evaporates. School kids could read about him in their history books; that is, if kids actually attend school and read. (Well, there’s always the possibility of a YouTube video if creators don’t evaporate like wet sidewalks when the sun shines.)

Engadget will have to find another festive event to attend.

Stephen E Arnold, December 15, 2025

The Loss of a Blue Check Causes Credibility to Be Lost

December 15, 2025

Another dinobaby post. No AI unless it is an image. This dinobaby is not Grandma Moses, just Grandpa Arnold.

At first glance, either the EU is not happy with the Teslas purchased for official use, or Elon Musk is a Silicon Valley luminary who sets some regulators’ teeth on edge. I read “Elon Musk Calls for Abolition of European Union After X Fined $140 Million.” The idea of dissolving the EU is unlikely to make the folks in Brussels and Strasbourg smile with joy. I think the estimable Mr. Putin and some of his surviving advisors may break out in broad grins. But the EU elected officials are unlikely to be doing high fives. (Well, that is just a guess.)

Thanks, Midjourney. Good enough.

The CNBC story says:

Elon Musk has called for the European Union to be abolished after the bloc fined his social media company X 120 million euros ($140 million) for a “deceptive” blue checkmark and lack of transparency of its advertising repository. The European Commission hit X with the ruling on Friday following a two-year investigation into the company under the Digital Services Act (DSA), which was adopted in 2022 to regulate online platforms. At the time, in a reply on X to a post from the Commission, Musk wrote, “Bulls—.”

Mr. Musk’s alleged reply is probably translated by official human translators as “Mr. Musk wishes to disagree with due respect.” Yep, that will work.

I followed up with a reluctant click on a “premium, you must pay” story from Politico. (I think its videos on YouTube are free or the videos themselves are advertisements for Politico.) That write up is titled “X Axes European Commission’s Ad Account after €120M EU Fine.” The main idea is that Mr. Musk is responding with actions, not just words. Imagine the EU will not be permitted to advertise on X.com. My view is that the announcement sent shockwaves through the elected officials and caused consternation in the EU countries.

The Politico essay says:

Nikita Bier, X’s head of product, accused the EU executive of trying to amplify its own social media post about the fine on X by trying “to take advantage of an exploit in our Ad Composer.”

Ah, ha. The EU is click baiting on X.com.

The write up adds:

The White House has accused the rules of discriminating against U.S. companies, and the fine will likely amplify transatlantic trade tensions. U.S. Secretary of Commerce Howard Lutnick has already threatened to keep 50 percent tariffs on European exports of steel and aluminum unless the EU loosens its digital rules.

Fascinating. A government entity finds a US Silicon Valley outfit in violation of one of its laws. That entity fines the Silicon Valley company. But the entire fine is little more than an excuse for [a] the EU to get clicks on Twitter (now, the outstanding X.com) and [b] the US government to suggest that tariffs on certain EU exports will not be reduced.

I almost forgot. The root issue is the blue check one can receive or purchase to make a short message more “valid.” Next we jump to a fine, which is certainly standard operating procedure for entities breaking a law in the EU and then to a capitalist company refusing to sell ads and finally to a linkage to tariff rates.

I am a dinobaby, and a very uninformed dinobaby. The two stories, the blue check, the government actions and the chain of consequences remind me of this proverb (author unknown):

“For want of a nail the shoe was lost;

For want of a shoe the horse was lost;

For want of a horse the rider was lost;

For want of a rider the message was lost;

For want of a message the battle was lost;

For want of a battle the kingdom was lost;

And all for the want of a horseshoe nail.”

I have revised the proverb:

“For want of a blue check the ads were lost;

For want of the ads, the click stream was lost;

For want of a click stream, the law suit was lost;

For want of a law suit, the fine was lost;

For want of the fine, the US influence was lost;

For want of influence, sanity was lost;

And all for the want of a blue check.”

There you go. A digital check has consequences.

Stephen E Arnold, December 15, 2025

Ah, Academia: The Industrialization Of Scientific Fraud

December 15, 2025

Everyone’s favorite technology and fiction writer Cory Doctorow coined a term that describes so many things in society: Degradation. You can see this in big box retail stores and middle school, but let’s review a definition. Degradation is the act of eroding or weathering. Other sources say it’s the process of being crappy. In a nutshell, business processes erode online platforms in order to increase profits.

According to ZME Science, scientific publishing has become a billion dollar degradation industry. The details are in the article, “We Need To Talk About The Billion-Dollar Industry Holding Science Hostage.” It details how Luís Amaral was disheartened after he conducted a study about scientific studies. His research revealed that scientific fraud is being created faster than legitimate science.

Editors and authors are working with publishers to release fraudulent research by taking advantage of loopholes to scale academic, receive funding, and whitewash reputations. The bad science is published by paper mills that are manufacturing bad studies and selling authorship and placements in journals with editors willing to “verify” the information. Here’s an example:

“One such paper mill, the Academic Research and Development Association (ARDA), offers a window into how deeply entrenched this problem has become. Notice that they all seem to have legitimate-sounding names. Between 2018 and 2024, ARDA expanded its list of affiliated journals from 14 to 86, many of which were indexed in major academic databases. Some of these journals were later found to be hijacked — illegitimately revived after their original publishers stopped operating. It’s something we’ve seen happen often in our own industry (journalism), as bankrupt legitimate legacy newspapers have been bought by shady venture capital, only to hijack the established brands into spam and affiliate marketing magnets.”

Paper mills doubled their output; therefore, the scientific community’s retractions are now doubling every 3.5 years. It’s outpacing legitimate science. Here’s a smart quote that sums up the situation: “Truth seeking has always been expensive, whereas fraud is cheap and fast.”
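For a sense of scale, here is a back-of-the-envelope reading of that 3.5-year doubling figure. It assumes steady exponential growth, which is my simplification and not something the ZME Science article spells out; the symbols $N_0$ (retractions today) and $t$ (years from now) are introduced only for illustration.

$$
N(t) = N_0 \cdot 2^{t/3.5}, \qquad \frac{N(1)}{N_0} = 2^{1/3.5} \approx 1.22, \qquad \frac{N(10)}{N_0} = 2^{10/3.5} \approx 7.2
$$

Under that assumption, retractions would grow by roughly 22 percent a year and by about a factor of seven over a decade.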

Fraudulent science is incredibly harmful. It leads to a ripple effect that has lasting ramifications on society. An example is a paper from the COVID-19 pandemic that said hydroxychloroquine was a valid treatment for the virus. It indirectly led to 17,000 fatalities.

AI makes things worse because the algorithms are trained on the bad science like a glutton on sugar. But remember, kids, don’t cheat.

Whitney Grace, December 15, 2025

Can Sergey Brin Manage? Maybe Not?

December 12, 2025

True Reason Sergey Used “Super Voting Power”

Yuchen Jin, the CTO and co-founder of Hyperbolic Labs, posted on X about a recent situation at Google. According to the post, Sergey Brin was disappointed in how Google was using Gemini. The AI algorithm, in fact, wasn’t being used for coding and Sergey wanted it to be used for that.

It created a big tiff. Sergey told Sundar that, “I can’t deal with these people. You have to deal with this.” Sergey still owns Google and has super voting power. Translation: he can do whatever he darn well pleases with his own company.

Yuchen Jin summed it up well:

“Big companies always build bureaucracy. Sergey (and Larry) still have super voting power, and he used it to cut through the BS. Suddenly Google is moving like a startup again. Their AI went from “way behind” to “easily #1” across domains in a year.”

Congratulations to Google for making a move that other Big Tech companies knew to make without the intervention of a founder.

Google would have eventually shifted to using Gemini for coding. Sergey’s influence only sped it up. The bigger question is if this “tiff” indicates something else. Big companies do have bureaucracies but if older workers have retired, then that means new blood is needed. The current new blood is Gen Z and they are as despised as Millennials once were.

I think this means Sergey cannot manage young tech workers either. He had to turn to the “consultant” to make things happen. It’s quite the admission from a Big Tech leader.

Whitney Grace, December 12, 2025

The Waymo Trip: From Cats and Dogs Waymo to the Parking Lot

December 12, 2025

Another dinobaby post. No AI unless it is an image. This dinobaby is not Grandma Moses, just Grandpa Arnold.

I am reasonably sure that Google Waymo offers “way more” than any other self driving automobile. It has way more cameras. It has way more publicity. Does it have way more safety than, for instance, a Tesla confused on Highway 101? I don’t know.

I read “Waymo Investigation Could Stop Autonomous Driving in Its Tracks.” The title was snappy, but the subtitle was the real hook:

New video shows a dangerous trend for Waymo autonomous vehicles.

What’s the trend?

Weeks ago, the Austin Independent School District noticed a disturbing trend: Waymo vehicles were not stopping for school buses that had their crossing guard and stop sign deployed.

Oh, Google Waymo smart cars don’t stop for school buses. Kids always look before jumping off a school bus and dashing across a street to see their friends or rush home to scroll Instagram. Smart software definitely can predict the trajectories of school kids. Well, probability is involved, so there is a teeny tiny chance that a smart car might do the “kill the Mission District” cat. But the chance is teeny tiny.

Thanks, Venice.ai. Good enough.

The write up asserts:

The Austin ISD has been in communication with Waymo regarding the violations, which it reports have occurred approximately 1.5 times per week during this school year. Waymo has informed them that software updates have been issued to address the issue. However, in a letter dated November 20, 2025, the group states that there have been multiple violations since the supposed fix.

What’s with these people in Austin? Chill. Listen to some country western music. Think about moving back to the Left Coast. Get a life.

Instead of doing the Silicon Valley wizardly thing, Austin showed why Texas is not the center of AI intelligence and admiration. The story says:

On Dec. 1, after Waymo received its 20th citation from Austin ISD for the current school year, Austin ISD decided to release the video of the previous infractions to the public. The video shows all 19 instances of Waymo violating school bus safety rules. Perhaps most alarmingly, the violations appear to worsen over time. On November 12, a Waymo vehicle was recorded violating a law by making a left turn onto a street with a school bus, its stop signs and crossbar already deployed. There are children in the crosswalk when the Waymo makes the turn and cuts in front of them. The car stops for a second then continues without letting the kids pass.

Let’s assume that after 16 years of development and investment, the Waymo self driving software intelligence gets an F in school bus recognition. Conjuring up a vehicle that can doddle down 101 at rush hour driven by a robot is a Silicon Valley inspiration. Imagine. One can sit in the automobile, talk on the phone, fiddle with a laptop, or just enjoy coffee and a treat from Philz in peace. Just ignore the imbecilic drivers in other automobiles. Yes, let’s just think it and it will become real.

I know the idea sounds great to anyone who has suffered traffic on 101 or the Foothills, but crushing the Mission District stray cat is just a warm up. What type of publicity heat will result from maiming Billy or Sally, whose father might be a big time attorney who left Seal Team 6 to enforce and defend city, county, state, and federal law? Cats don’t have lawyers. The parents of harmed children either do or can get one pretty easily.

Getting a lawyer is much easier than delivering on a dream that is a bit of a nightmare after 16 years and an untold amount of money. But the idea is a good one. Sort of.

Stephen E Arnold, December 12, 2025