AI Will Kill, and People Will Grow Accustomed to That … Smile

October 30, 2025

This essay is the work of a dumb dinobaby. No smart software required.

I spotted a story in SFGate, which I think was or is part of a dead tree newspaper. What struck me was the photograph (allegedly not a deep fake) of two people looking not just happy. I sensed a bit of self satisfaction and confidence. Regardless, both people grace “Society Will Accept a Death Caused by a Robotaxi, Waymo Co-CEO Says.” Death, as far back as I can recall as an 81-year-old dinobaby, has never made me happy, but I just accepted the way life works. Part of me says that my vibrating waves will continue. I think Blaise Pascal suggested that one should believe in God because what’s the downside? Go, Blaise, a guy who did not get to experience an accident involving a self-driving smart vehicle.

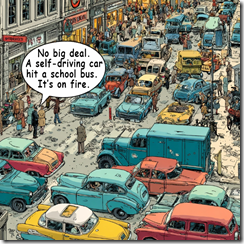

A traffic jam in a major metro area. The cause? A self-driving smart vehicle struck a school bus. But everyone is accustomed to this type of trivial problem. Thanks, MidJourney. Good enough like some high-tech outfits’ smart software.

But Waymo is a Google confection dating from 2010 if my memory is on the money. Google is a reasonably big company. It brokers, sells, and creates a market for its online advertising business. The cash spun from that revolving door is used to fund great ideas and moon shots. Messrs. Brin, Page, and assorted wizards had some time to kill as they sat in their automobiles creeping up and down Highway 101. The idea of a self-driving car that would allow a very intelligent, multi-tasking driver to do something more productive than become a semi-sentient meat blob sparked an idea. We can rig a car to creep along Highway 101. Cool. That insight spawned what is now known as Waymo.

An estimable Google Waymo expert found himself involved in litigation related to Google’s intellectual property. I had ignored Waymo until Anthony Levandowski founded a company, sold it to Uber, and then ended up in a legal matter that lasted from 2017 to 2019. Publicity, I have heard, whether positive or negative, is good. I knew about Waymo: A Google project, intellectual property, and litigation. Way to go, Waymo.

For me, Waymo appears in some social media posts (allegedly actual factual) when Waymo vehicles get trapped in a dead end in Cow Town. Sometimes the Waymos don’t get out of the way of traffic barriers and sit purring and beeping. I have heard that some residents of San Francisco have [a] kicked Waymos, [b] sprayed graffiti on them, and/or [c] put traffic cones in certain roads to befuddle the smart Google software-powered vehicles. From a distance, these look a bit like something from a Mad Max motion picture.

My personal view is that I would never stand in front of a rolling Waymo. I know that [a] Google search results are not particularly useful, [b] Google’s AI outputs crazy information like glue cheese on pizza, and [c] Waymos have been involved in traffic incidents which cause me to stay away from Waymos.

The cited article says that the Googler said in response to a question about a hypothetical Waymo killing of a person:

“I think that society will,” Mawakana answered, slowly, before positioning the question as an industry wide issue. “I think the challenge for us is making sure that society has a high enough bar on safety that companies are held to.” She said that companies should be transparent about their records by publishing data about how many crashes they’re involved in, and she pointed to the “hub” of safety information on Waymo’s website. Self-driving cars will dramatically reduce crashes, Mawakana said, but not by 100%: “We have to be in this open and honest dialogue about the fact that we know it’s not perfection.” [Emphasis added by Beyond Search]

My reactions to this allegedly true and accurate statement from a Googler are:

- I am not confident that Google can be “transparent.” Google, according to one US court, is a monopoly. Google has been fined by the European Union for saying one thing and doing another. The only reason I know about these court decisions is that legal processes released information. Google did not provide the information as part of its commitment to transparency.

- Waymos create problems because the Google smart software cannot handle the demands of driving in the real world. The software is good enough, but not good enough to figure out dead ends, actions by human drivers, and potentially dangerous situations. I am aware of fender benders and collisions with fixed objects that have surfaced in Waymo’s 15-year history.

- Self-driving cars, specifically Waymos, will injure or kill people. But Waymo cars are safe. So some level of killing humans is okay with Google, regulators, and society in general. What about the family of the person who is killed by good enough Google software? The answer: The lawyers will blame something other than Google. Then fight in court because Google has oodles of cash from its estimable online advertising business.

The cited article quotes the Waymo Googler as saying:

“If you are not being transparent, then it is my view that you are not doing what is necessary in order to actually earn the right to make the roads safer,” Mawakana said. [Emphasis added by Beyond Search]

Of course, I believe everything Google says. Why not believe that Waymos will make deaths caused by self-driving vehicles acceptable? Why not believe Google is transparent? Why not believe that Google will make roads safer? Why not?

But I like the idea that people will accept an AI vehicle killing people. Stuff happens, right?

Stephen E Arnold, October 30, 2025